Algos Prep

※ 1. Abstract

This is a running document that hosts my learnings from doing algorithmic puzzles. The main objective here is to gain an intuition for different question types so that I can pattern-match easily. This will be helpful for both technical interviews and on the daily, where identifying common patterns helps find nifty solutions to problems.

We shall explore the breadth of problems by following some common question sets, and then surgically tackle the areas that I find non-intuitive to get some depth gains.

Table of Contents

- 1. Abstract

- 2. Tackling the Basics: Neetcode 150

- 2.1. Goals, Context and Retro

- 2.2. Arrays & Hashing

- 2.2.1. [1] Contains Duplicate (217)

- 2.2.2. [2] Valid Anagram (242)

- 2.2.3. [3] Two Sum (1)

- 2.2.4. [4] Group Anagrams (49)

- 2.2.5. [5] Top K Frequent Elements (347)

- 2.2.6. [6] Encode and Decode Strings [neetcode ref] [ref

- 2.2.7. [7] Product of Array Except Self (238)

- 2.2.8. [8] Valid Sudoku (36)

- 2.2.9. [9] Longest Consecutive Sequence (128) med sequence accumulation

- 2.2.10. [Depth-1] Subarray Sum Equals K (560) prefix_sum

- 2.2.11. [exposure-1] Sort Vowels in a String (2785)

- 2.3. Two Pointers

- 2.4. Stack

- 2.4.1. General Notes

- 2.4.2. [15] Valid Parentheses (20)

- 2.4.3. [16] Min Stack (155)

- 2.4.4. [17] Evaluate Reverse Polish Notation (150)

- 2.4.5. [18] ⭐️ Generate Parentheses (22) med redo backtracking combinations

- 2.4.6. [19] Daily Temperatures (739) med redo monotonic_stack

- 2.4.7. [20] Car Fleet (853) merge_from_one_side grouping segmentation

- 2.4.8. [21] ⭐️Largest Rectangle in Histogram (84) hard redo monotonic_stack

- 2.4.9. [Depth Blind 1] Remove Duplicate Letters (316) failed greedy monotonic_stack

- 2.4.10. [Depth-Blind 2] 132 Pattern (456) failed subsequence stack

- 2.5. Binary Search

- 2.5.1. General Notes

- 2.5.2. [22] Binary Search (704)

- 2.5.3. [23] Search a 2D Matrix (74) custom_flattening

- 2.5.4. [24] Koko Eating Bananas (875)

- 2.5.5. [25] Find Minimum in Rotated Sorted Array (153) almost

- 2.5.6. [26] Time Based Key Value Store (981)

- 2.5.7. TODO [27] Median of two sorted arrays [4] redo hard binary_search median virtual_domain

- 2.5.8. [Depth 1] Capacity to Ship Packages within D Days (1011) left_boundary_finding binary_search

- 2.5.9. [Depth 2] Find Minimum in Rotated Sorted Array II (154) hard binary_search rotated_array

- 2.5.10. [Depth 3] Search in Rotated Sorted Array (33) med

- 2.5.11. [Exposure 1] First/Last Position of Element in Sorted Array (34)

- 2.5.12. [Exposure 2] Single Element in a Sorted Array (540)

- 2.6. Sliding Window

- 2.6.1. General Notes

- 2.6.2. [28] Best Time to Buy And Sell Stock (121) sliding_window converge_to_a_side kadane_light

- 2.6.3. [29] Longest Substring Without Repeating Characters (3) sliding_window

- 2.6.4. [30] Longest Repeating Character Replacement (424) redo

- 2.6.5. [31] Permutation in String (567)

- 2.6.6. [32] Minimum Window Substring (76) hard almost

- 2.6.7. [33] Sliding Window Maximum (239) hard monotonic_deque

- 2.6.8. [Depth-Blind 1] Maximum Number of Vowels in a Substring of Given Length (1456) sliding_window

- 2.6.9. [Depth-Blind 2] Replace the Substring for Balanced String (1234) failed almost character_counting

- 2.6.10. [Depth-Blind 3] Find all Anagrams in a String (438) char_counting sliding_window fixed_size

- 2.6.11. TODO [Depth-Blind 3] Subarrays with K different Integers (992) failed double_window

- 2.6.12. [Exposure 1] Number of People Aware of a Secret (2327)

- 2.7. Linked List

- 2.7.1. General Notes

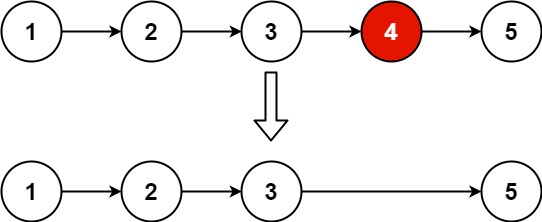

- 2.7.2. [34] Reverse Linked List (206)

- 2.7.3. [35] Merge Two Sorted Lists (21) dummy_node_method

- 2.7.4. [36] Linked List Cycle (141) tortoise_hare_method

- 2.7.5. [37] Reorder List (143) in_place split_reverse_merge_method

- 2.7.6. [38] Remove Nth Node from End of List (19) med dummy_node_method

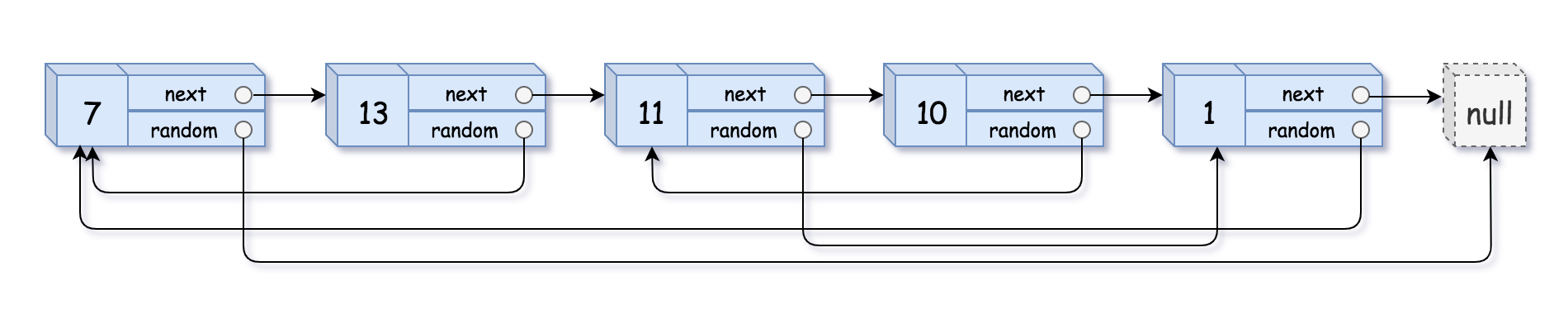

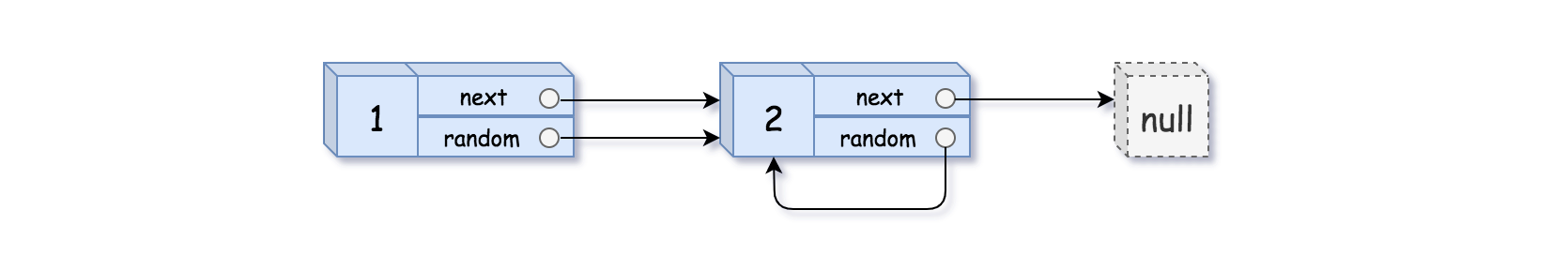

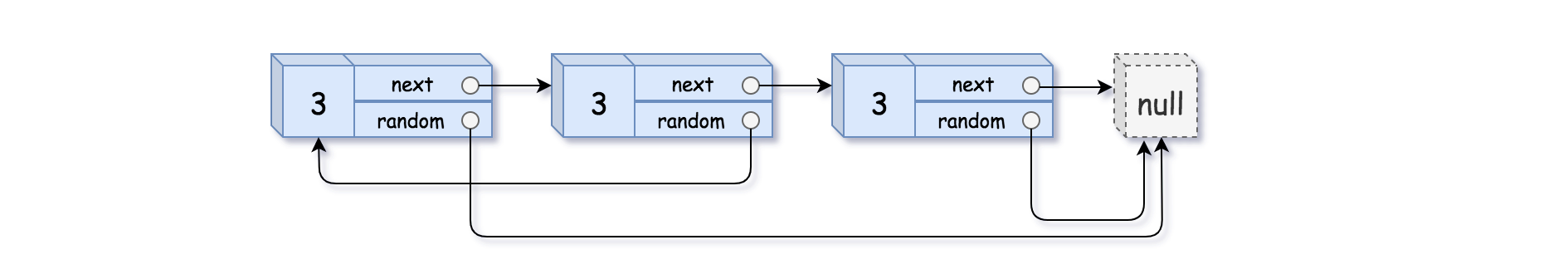

- 2.7.7. [39] Copy List with Random Pointer (138) interleaving_method

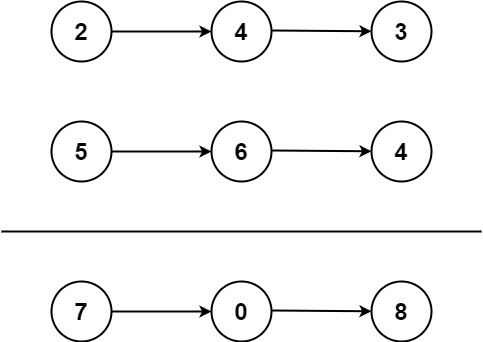

- 2.7.8. [40] Add two numbers (2) dummy_node_method human_calculation

- 2.7.9. [41] ⭐️ Find the Duplicate Number (287) redo tortoise_hare_method array linked_list

- 2.7.10. [42] LRU Cache (146)

- 2.7.11. [43] Merge k sorted lists [23] hard merge_sort_merge_method min_heap

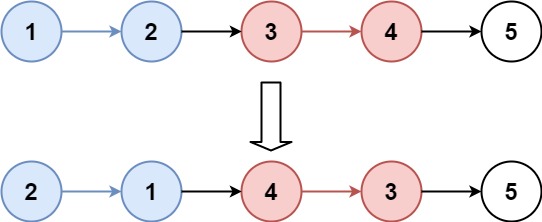

- 2.7.12. [44] Reverse nodes in k-Group (25) redo almost hard dummy_node_method sliding_window

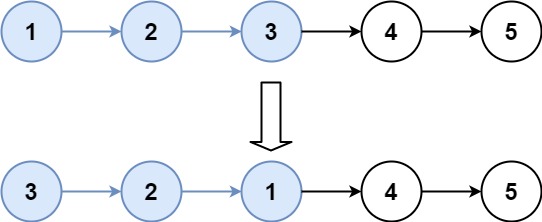

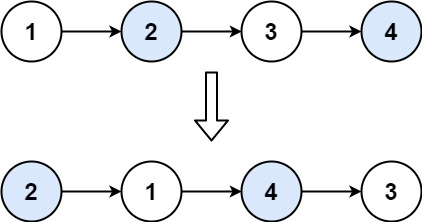

- 2.7.13. [Depth-1] Swap Nodes in Pairs (24)

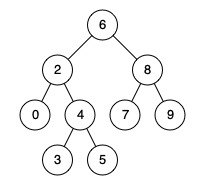

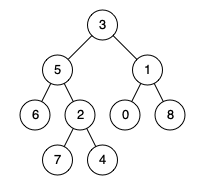

- 2.8. Trees

- 2.8.1. General Notes

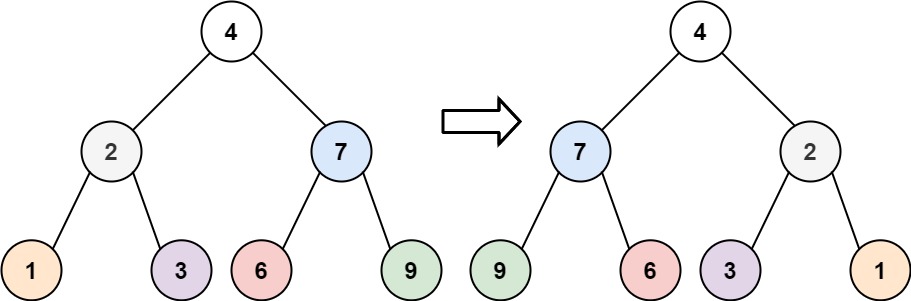

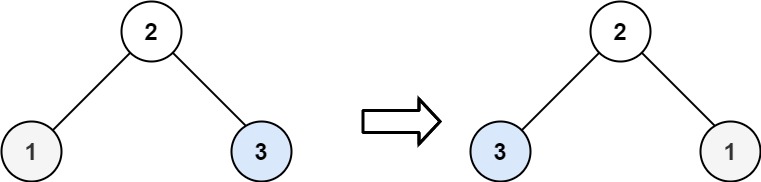

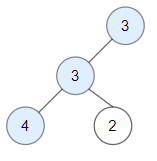

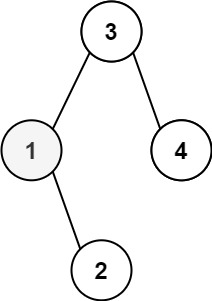

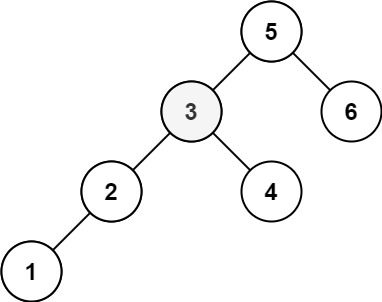

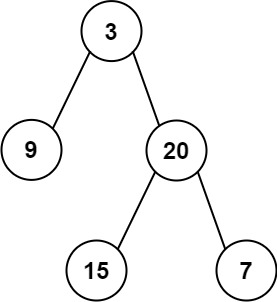

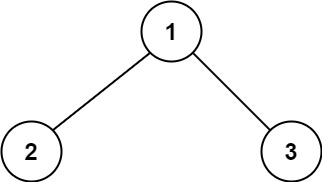

- 2.8.2. [45] Invert Binary Tree (226)

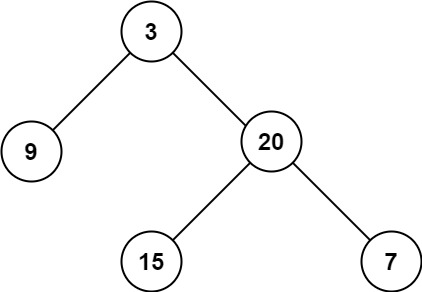

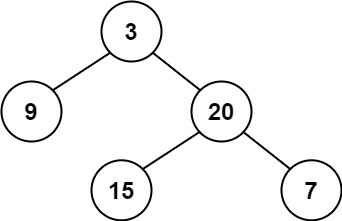

- 2.8.3. [46] Maximum Depth of Binary Tree (104)

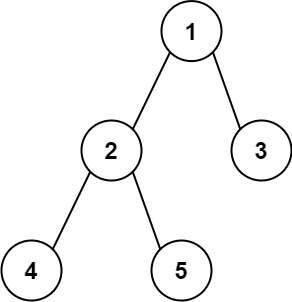

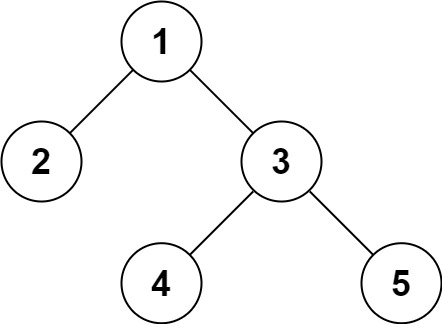

- 2.8.4. [47] Diameter of Binary Tree (543) redo post_order_dfs easy

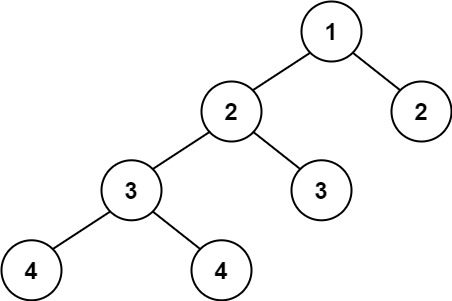

- 2.8.5. [48] Balanced Binary Tree (110) state_threading

- 2.8.6. [49] Same Tree (100)

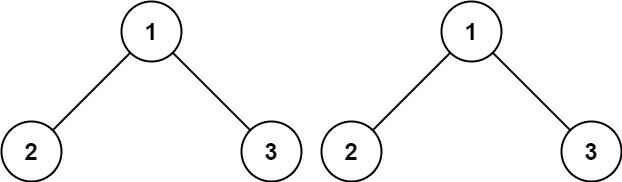

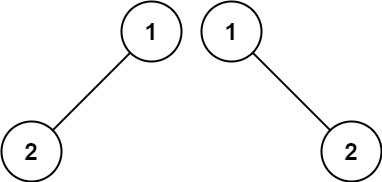

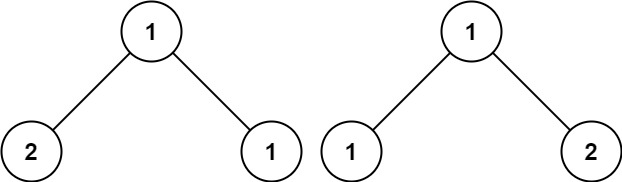

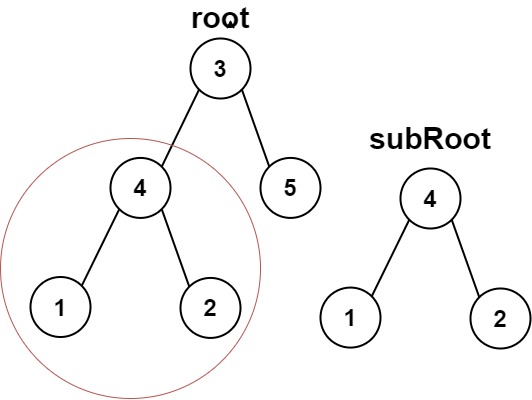

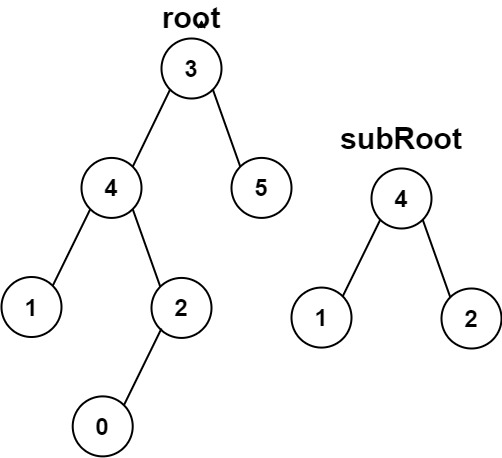

- 2.8.7. [50] Subtree of Another Tree (572) sameness

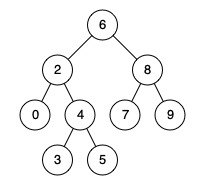

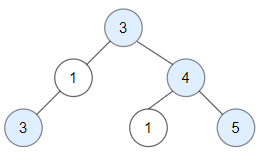

- 2.8.8. [51] Lowest Common Ancestor of a Binary Search Tree (235) BST

- 2.8.9. [52] Binary Tree Level Order Traversal (102)

- 2.8.10. [53] Binary Tree Right Side View (199) right_first_traversal

- 2.8.11. [54] Count Good Nodes in Binary Tree (1448)

- 2.8.12. [55] Validate Binary Search Tree (98) bst_property

- 2.8.13. [56] Kth Smallest Element in BST (230) augmentation

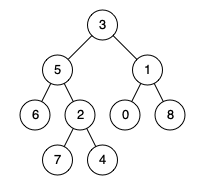

- 2.8.14. [57] ⭐️ Construct Binary Tree from Preorder and Inorder Traversal (105) redo traversal_properties

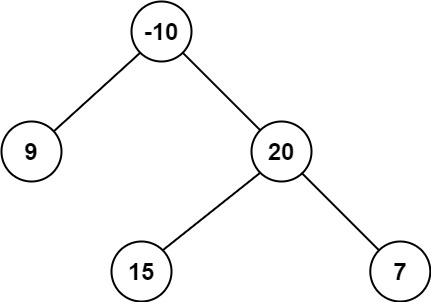

- 2.8.15. [58] Binary Tree Maximum Path Sum (124) redo hard gain_accumulation

- 2.8.16. TODO [59] Serialize and Deserialize Binary Tree (297) redo hard backtracking trie

- 2.8.17. [Depth 1] Lowest Common Ancestor of a Binary Tree (236)

- 2.9. Tries

- 2.10. Backtracking

- 2.10.1. General Notes

- 2.10.2. [63] ⭐️ Subsets (78) redo power_set combinations no_duplicates_allowed

- 2.10.3. [64] Combination Sum (39) redo combination duplicates_allowed

- 2.10.4. [65] Combination Sum II (40) redo almost permutation pruning mental_model_clearer_now

- 2.10.5. [67] Permutations (46) permutation

- 2.10.6. [68] Subsets II (90) pruning combination

- 2.10.7. [69] Word Search (79) DFS

- 2.10.8. [70] Palindrome Partitioning (131) almost partitioning pruning

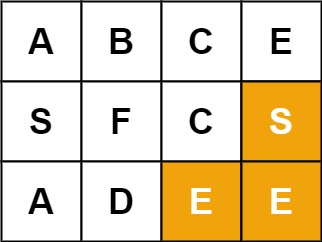

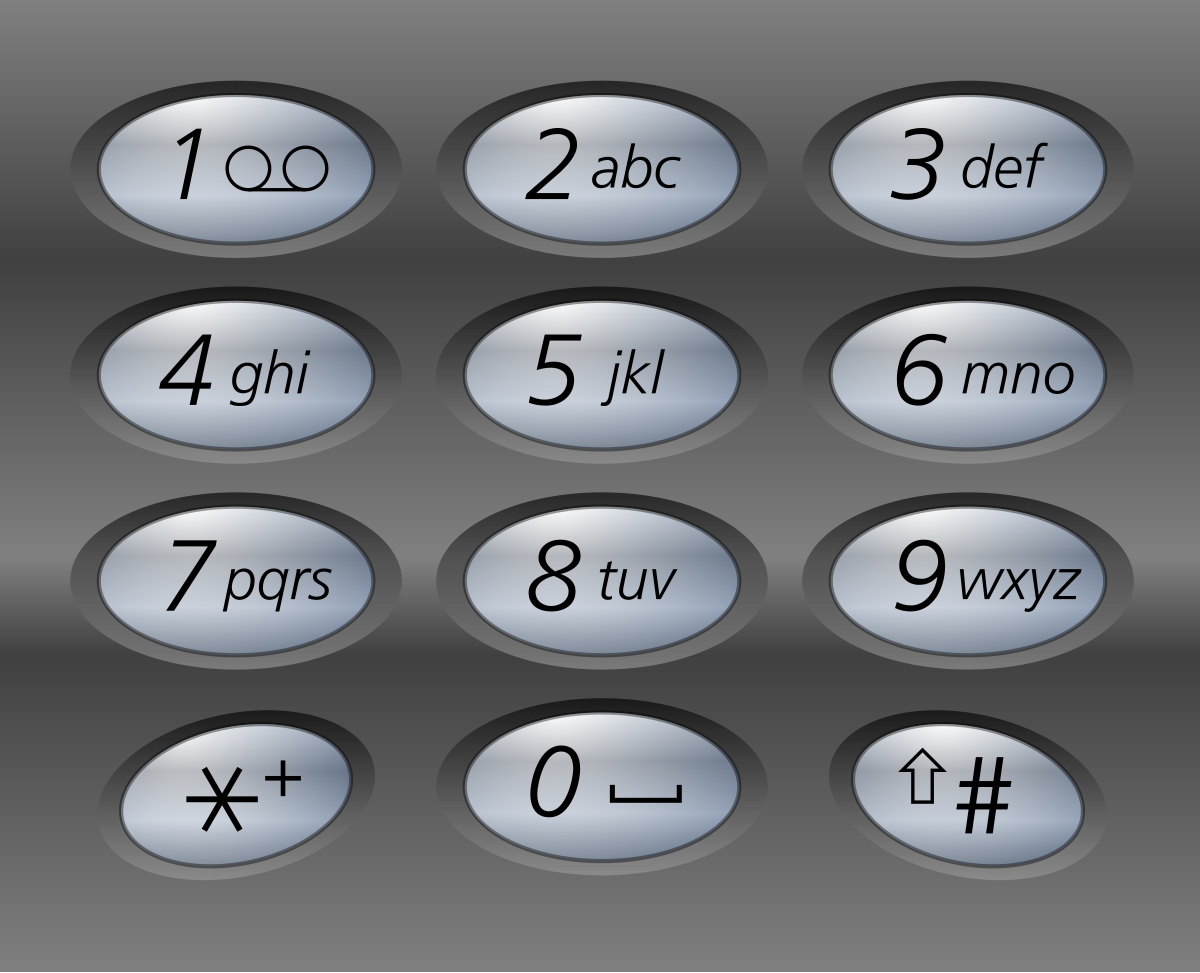

- 2.10.9. [71] Letter Combinations of a Phone Number (17)

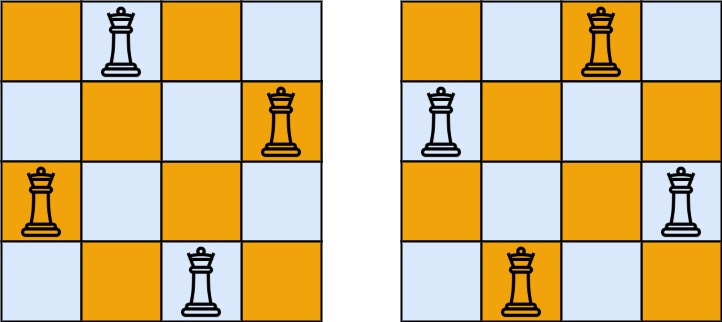

- 2.10.10. [72] ⭐️ N-Queens (51) hard almost redo pruning board matrix chess

- 2.10.11. [Depth-1] Sudoku Solver (37) hard sudoku dimension_flattening_index

- 2.11. Heap / Priority Queue

- 2.11.1. General Notes

- 2.11.2. [73] Kth Largest Element in a Stream (703)

- 2.11.3. [74] Last Stone Weight (1046)

- 2.11.4. [75] K Closest Points to Origin (973)

- 2.11.5. [76] ⭐️ Kth Largest Element in an Array (215) redo quick_select_algo

- 2.11.6. [77] Task Scheduler (621) almost redo greedy priority_queue

- 2.11.7. [78] Design Twitter (355) K_way_merge

- 2.11.8. [79] Find Median from Data Stream (295) hard double_heap_method

- 2.12. Graphs

- 2.12.1. General Notes

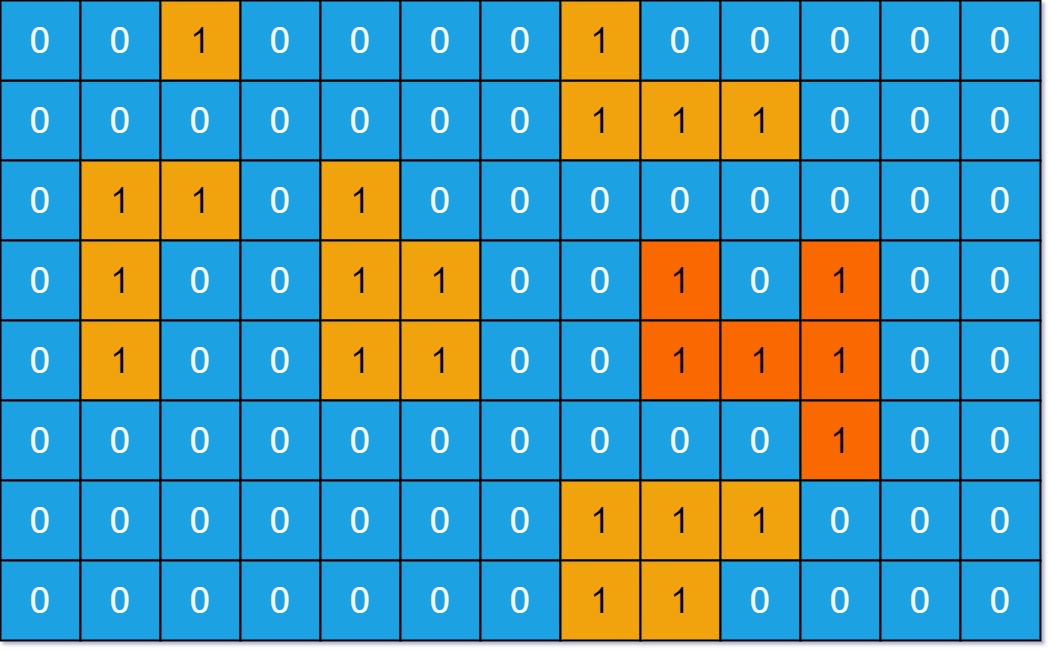

- 2.12.2. [80] Number of Islands (200) flood_fill reuse_input union_find

- 2.12.3. [81] Max Area of Island (695) flood_fill

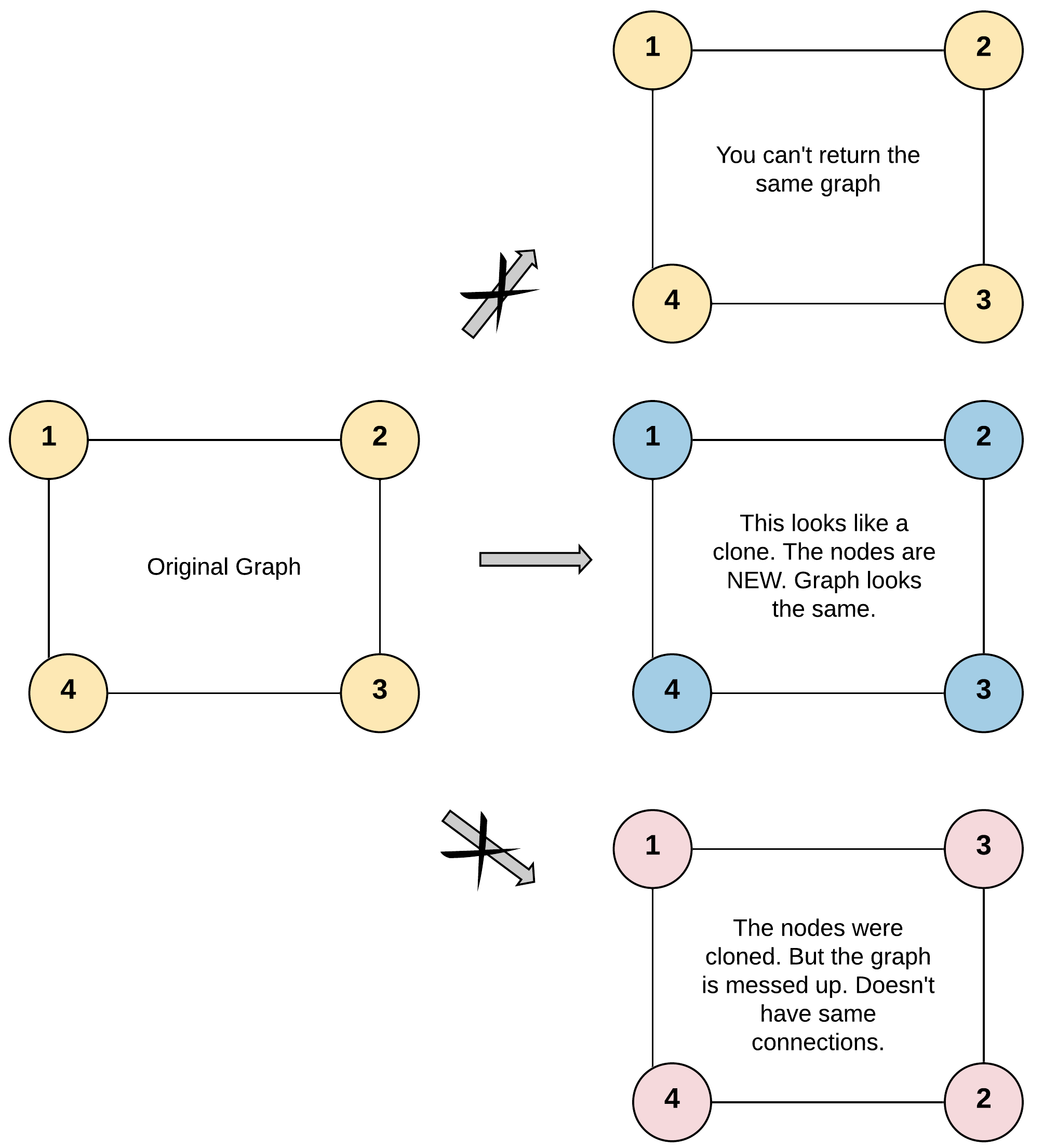

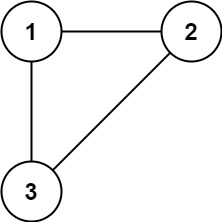

- 2.12.4. [82] Clone Graph (133) BFS undirected_graph almost

- 2.12.5. [83] Walls and Gates (??) multi_source_BFS

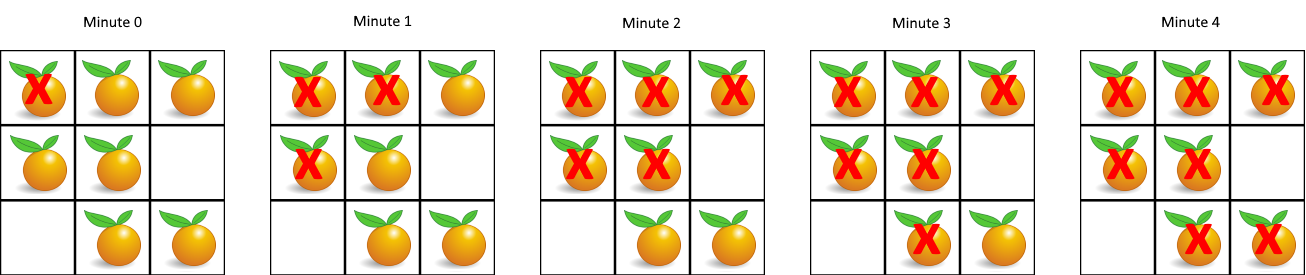

- 2.12.6. [84] Rotting Oranges (994) multi_source_BFS simulated_time_frontier

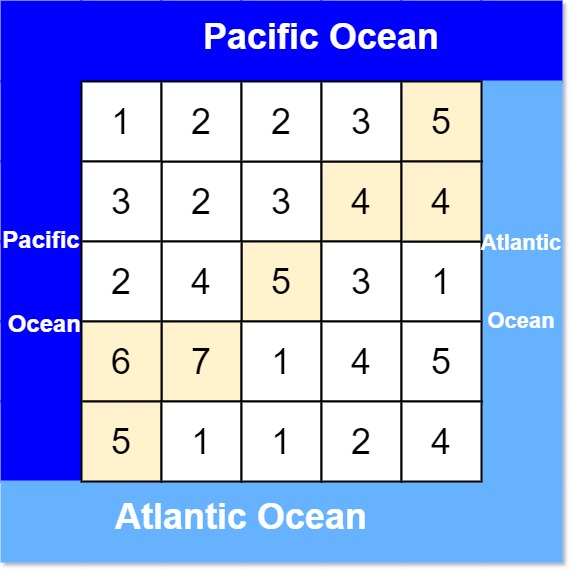

- 2.12.7. [85] ⭐️ Pacific Atlantic Water Flow (417) complement_approach flood_fill simulation border_to_center inverse_requirements reachability

- 2.12.8. [86] Surrounded Regions (130) bad_question complement_approach intermediate_marks border_to_center

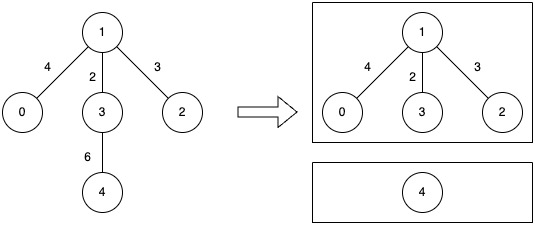

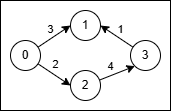

- 2.12.9. [87] Course Schedule I (207) redo 3_state_visited Kahns_algo topological_sort cycle_detection

- 2.12.10. [88] Course Schedule II (210) Kahn_algo topological_sort

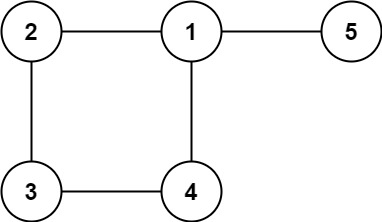

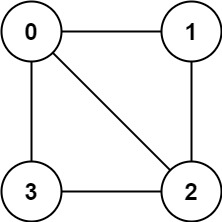

- 2.12.11. [89] Graph Valid Tree (??)

- 2.12.12. [90] Number of Connected Components in an Undirected Graph (323) union_find

- 2.12.13. [91] ⭐️ Redundant Connection (684) redo union_find cycle_finding incremental_processing

- 2.12.14. [92] Word Ladder (127) hard almost wildcard_pattern_matching

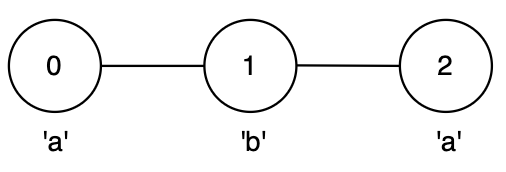

- 2.12.15. [D-1] Is Graph Bipartite (785) bipartite_graph

- 2.12.16. [D-2] Possible Bipartition (886) bipartite_graph

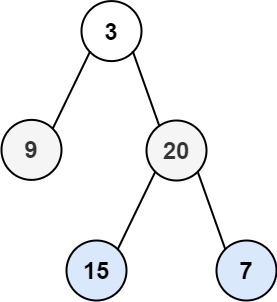

- 2.13. 1-D DP

- 2.13.1. General Notes

- 2.13.2. [93] Climbing Stairs (70)

- 2.13.3. [94] Min Cost Climbing Stairs (746) cost_reference rolling_2_var_method

- 2.13.4. [95] House Robber I (198)

- 2.13.5. [96] House Robber II (213) circular_dependencies number_of_subproblems

- 2.13.6. [97] ⭐️ Longest Palindromic Substring (5) 2D_DP Manachers_Algo

- 2.13.7. [98] Palindromic Substrings (647) 2D_DP

- 2.13.8. [99] Decode Ways (91) redo 1D_DP rolling_2_var_method

- 2.13.9. [100] Coin Change (322) DP recursive_top_down

- 2.13.10. [101] Maximum Product Subarray (152) redo rolling_2_var_method 1D_DP

- 2.13.11. [102] Word Break (139) 1D_DP 2_pointers

- 2.13.12. [103] Longest Increasing Subsequence (300) 1D_DP patience_tracking_algo

- 2.13.13. [104] ⭐️ ⭐ Partition Equal Subset Sum 1D_DP 0_1_subset_sum 0_1_knapsack knapsack counting_dp counting

- 2.13.14. [Depth Blind 1] Ones and Zeroes (474) failed 0_1_subset_sum knapsack

- 2.13.15. TODO [Depth-Blind 2] Tallest Billboard (956) failed balanced_partition knapsack

- 2.14. Intervals

- 2.14.1. General Notes

- 2.14.2. [105] Insert Interval (57)

- 2.14.3. [106] Merge Intervals (56)

- 2.14.4. [107] Non-Overlapping Intervals (435) redo greedy sort_end_times

- 2.14.5. [108] Meeting Rooms I (???)

- 2.14.6. [109] Meeting Rooms II (???)

- 2.14.7. [110] Minimum Interval to Include Each Query (1851) almost hard 2_pointers min_heap

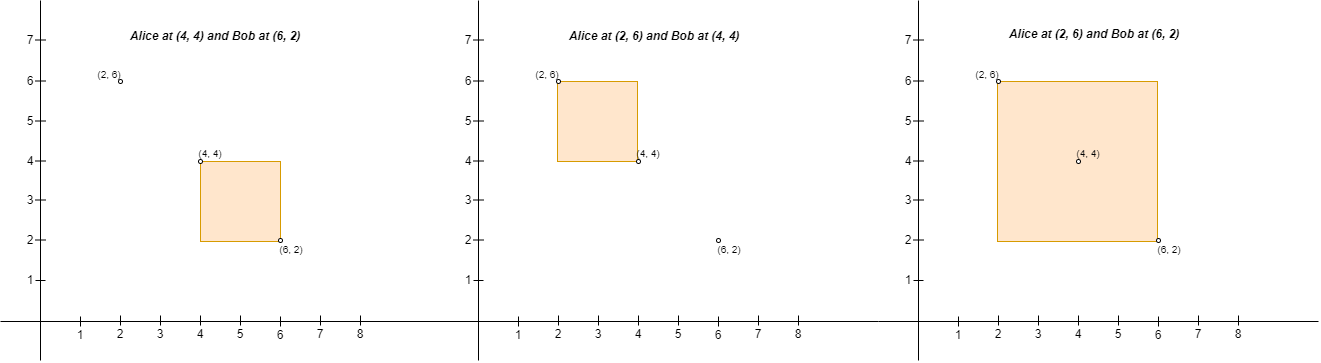

- 2.14.8. [exposure-1] Find the Number of Ways to Place People II (3027) hard sweepline

- 2.15. Greedy Algos

- 2.15.1. General Notes

- 2.15.2. [111] Maximum Subarray (53) greedy kadane_algorithm

- 2.15.3. [112] Jump Game (55) greedy

- 2.15.4. [113] Jump Game II (45) greedy rephrase_the_question

- 2.15.5. [114] Gas Station (134) redo greedy kadane_algorithm

- 2.15.6. [115] Hand of Straights (846) almost greedy frequency_counting

- 2.15.7. [116] Merge Triplets to Form Target Triplet (1899) almost element_wise_max coverage_check

- 2.15.8. ⭐️ [117] Partition Labels (763)

- 2.15.9. [118] Valid Parenthesis String (678) 2_stack greedy_range_counting

- 2.15.10. [Exposure-1] Minimum Number of People to Teach (1733) counting disguised_as_graph

- 2.16. Advanced Graphs

- 2.16.1. General Notes

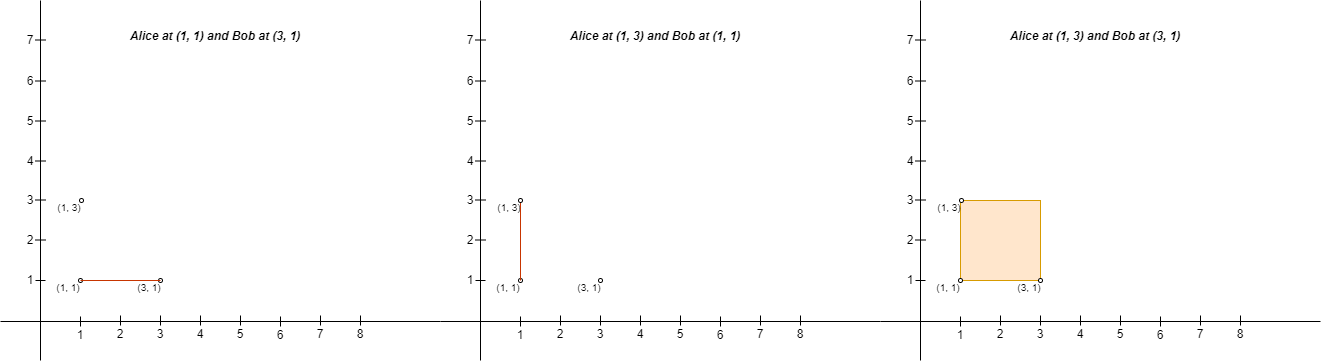

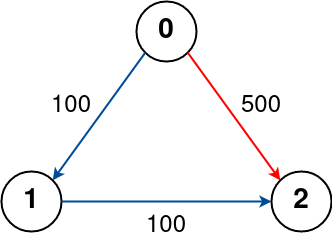

- 2.16.2. [119] Network Delay Time (743) dijkstra single_source_to_all_dest

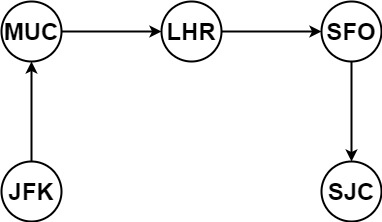

- 2.16.3. [120] ⭐️Reconstruct Itinerary (332) hard Eulerian_path excusemewhat Heirholzers_algo

- 2.16.4. [121] Min Cost to Connect All Points (1584) space_hacking lazy_hack lazy Prims_algo

- 2.16.5. [122] ⭐️ Swim in Rising Water (778) hard flood_fill dijkstra binary_search

- 2.16.6. [123] ⭐️ Alien Dictionary (269) redo tedius hard Kahns_algo topological_sort

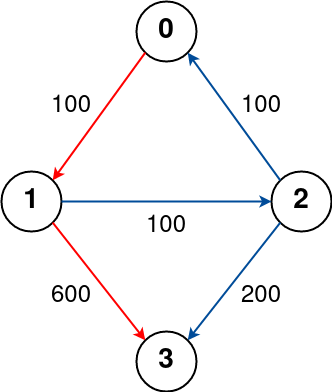

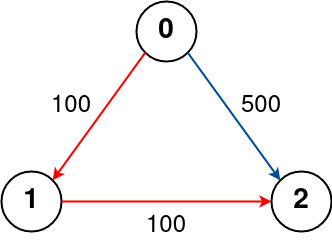

- 2.16.7. [124] Cheapest Flights Within K Stops (787) bellman_ford almost confounding_gotcha

- 2.16.8. [Depth-Blind] Sort Items by Groups Respecting Dependencies (1203) failed 2_phase_topological_sort topological_sorting

- 2.17. 2-D DP

- 2.17.1. General Notes

- 2.17.2. [125] Unique Paths (62) path_enum DP combinatorial_solution

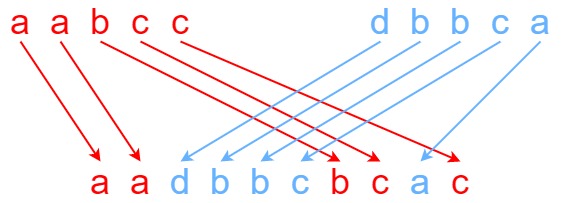

- 2.17.3. [126] Longest Common Subsequence (1143) classic string_dp prefix_tracking

- 2.17.4. [127] ⭐️ Best Time to Buy and Sell Stock with Cooldown (309) state_machine FSM_DP n_array_dp time_simulation snapshot_rolling_vars

- 2.17.5. [128] ⭐️⭐️ Coin Change II (518) redo 1D_DP combination_sum combinations unbounded_knapsack knapsack counting

- 2.17.6. [129] ⭐️⭐️ Target Sum (494) problem_reduction bounded_knapsack knapsack subset_sum 1D_DP backward_loop_trick

- 2.17.7. DONE [130] Interleaving String (97) interleaving rolling_1D_array

- 2.17.8. ⭐️ [132] Distinct Subsequences (115) hard subsequences dp

- 2.17.9. [133] Edit Distance (72) subsequences prefix_matching

- 2.17.10. [134] ⭐️ Burst Balloons (312) redo hard interval_DP divide_and_conquer think_in_reverse matmul_dp_pattern

- 2.17.11. DONE [135] Regular Expression Matching (10) hard regex_matching 2D_string_matching

- 2.17.12. [Depth Blind 1] Word Break II (140) hard failed

- 2.17.13. [Depth Blind 2] Number of Unique Good Subsequences (1987) hard failed counting 2_state_tracking

- 2.18. Bit Manipulation

- 2.18.1. General Notes

- 2.18.2. [136] Single Number (136) classic pair_cancellation_via_XOR

- 2.18.3. [137] Number of 1 Bits (191) hamming_weight popcount

- 2.18.4. [138] Counting Bits (338) DP popcount

- 2.18.5. [139] Reverse Bits (190) bit_reversal fixed_width

- 2.18.6. [140] Missing Number (268) pair_cancellation_via_XOR compare_with_idx

- 2.18.7. [141] ⭐️Sum of Two Integers (371) sum_without_operator fixed_width_integer

- 2.18.8. [142] ⭐️Reverse Integer (7) overflow_detection decimal_shift

- 2.19. Math & Geometry

- 2.19.1. General Notes

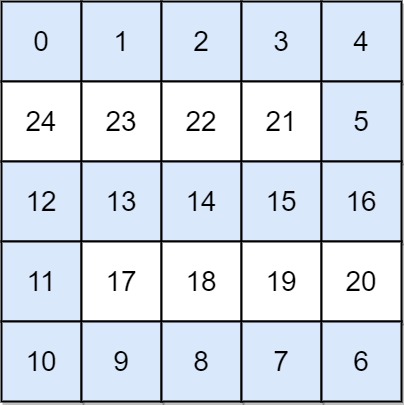

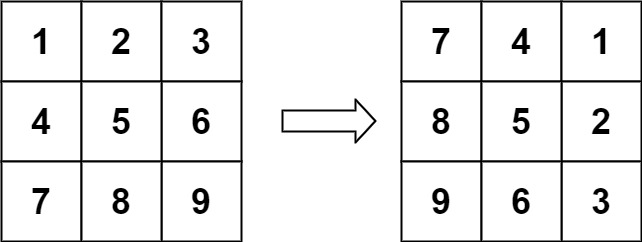

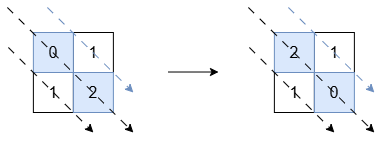

- 2.19.2. [143] Rotate Image (48) redo pointer_management matrix_rotation

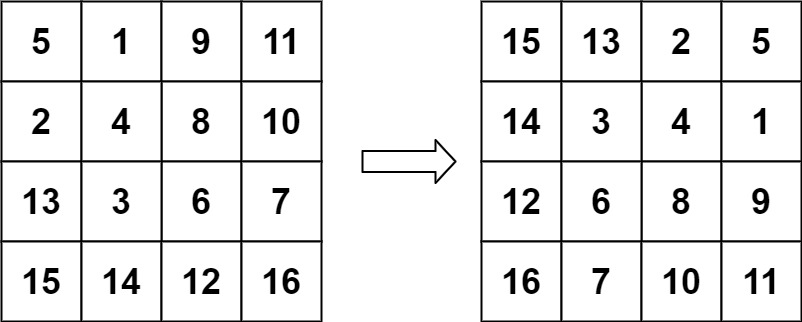

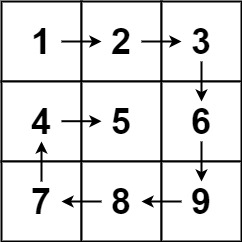

- 2.19.3. [144] Spiral Matrix (54) redo accuracy_problem pointer_management direction_simulation

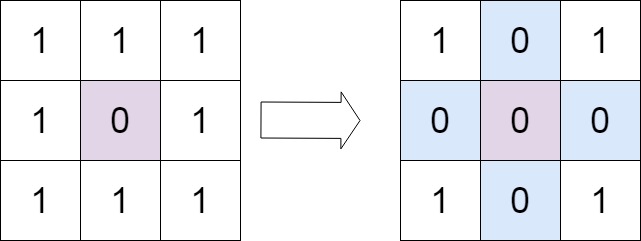

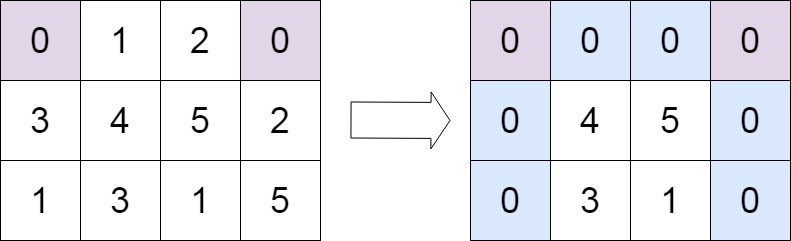

- 2.19.4. [145] Set Matrix Zeroes (73) in_place_markers

- 2.19.5. [146] Happy Number (202) tortoise_hare_method integer_division_vs_float_division test_for_loop

- 2.19.6. [147] Plus One (66) carry_propagation in_place

- 2.19.7. [148] Pow(x, n) (50) redo fast_exponentiation binary_exponentiation classic

- 2.19.8. [149] ⭐️ Multiply Strings (43) multiplication_algo

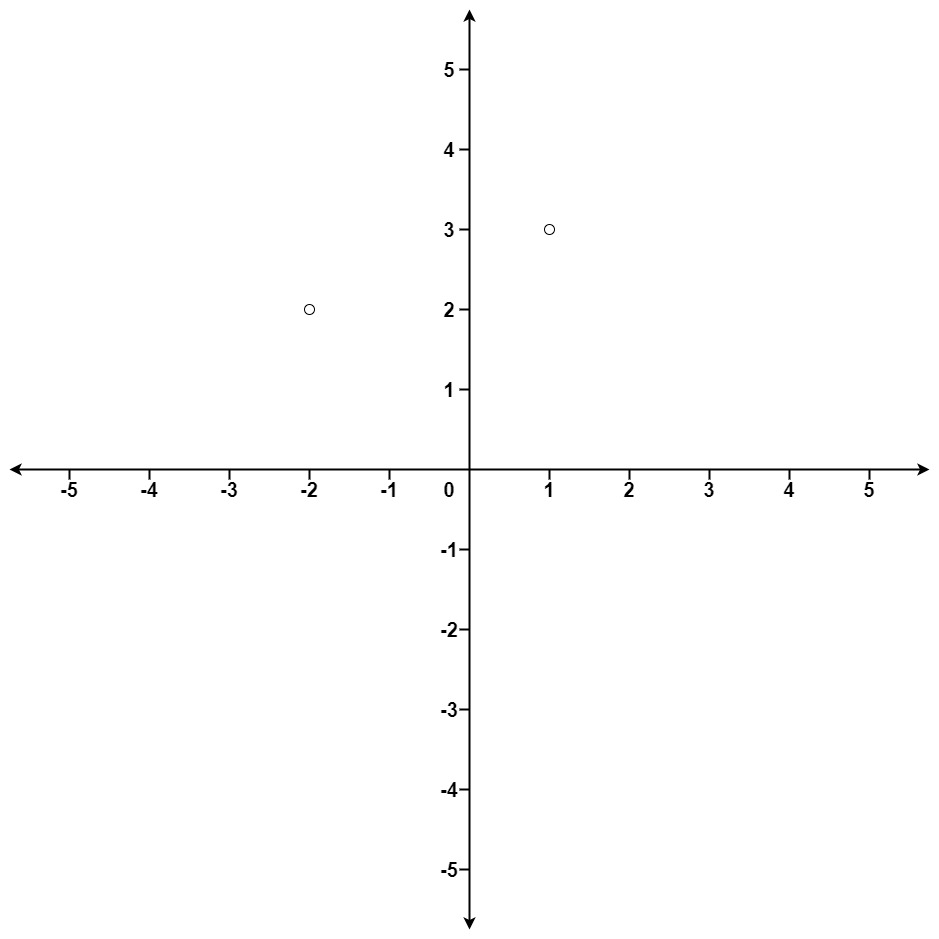

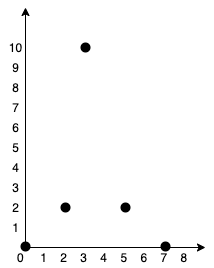

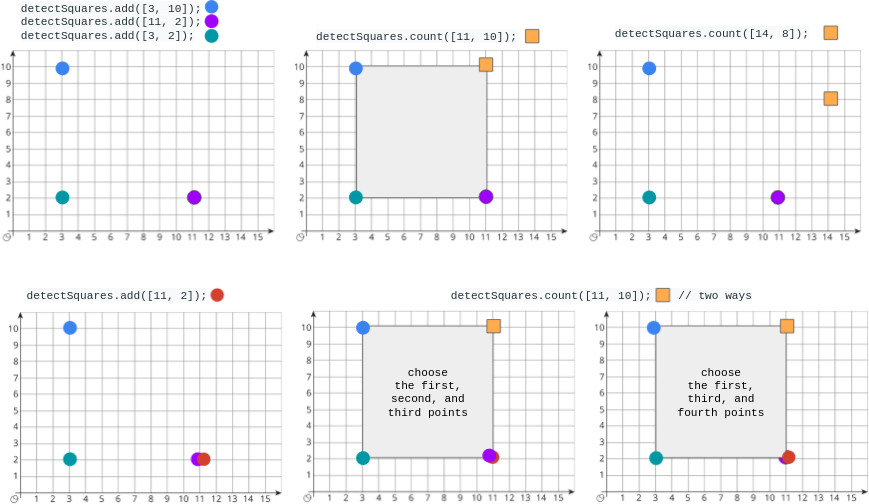

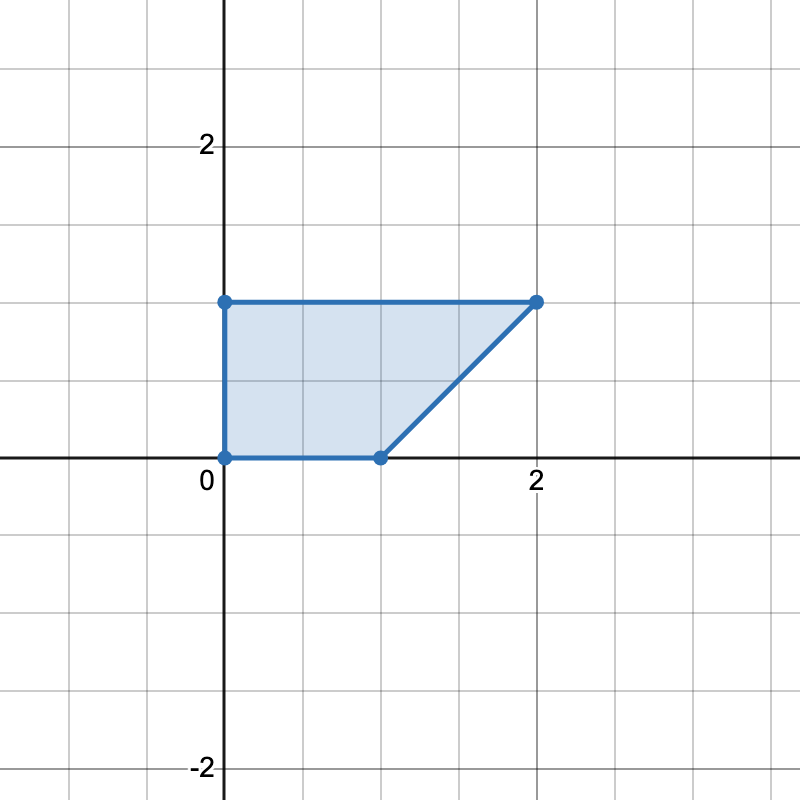

- 2.19.9. [150] Detect Squares (2013) cartesian_plane

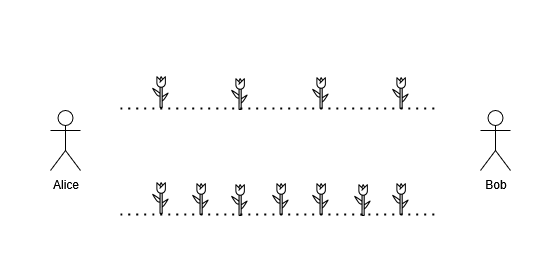

- 2.19.10. [exposure-1] Alice and Bob Playing Flower Game (3021) counting

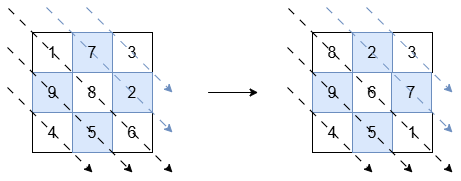

- 2.19.11. [exposure-2] Sort Matrix by Diagonals (3446)

- 2.19.12. [exposure-3] Walking Robot Simulation (874) simulation turtlebot

- 3. Depth Gains

- 4. Exposure Gains

- 5. Basic Grid 83 & Neetcode 150

- 5.1. Day 1

- 5.2. Day 2

- 5.3. Day 3

- 5.4. Day 4 [Restart]

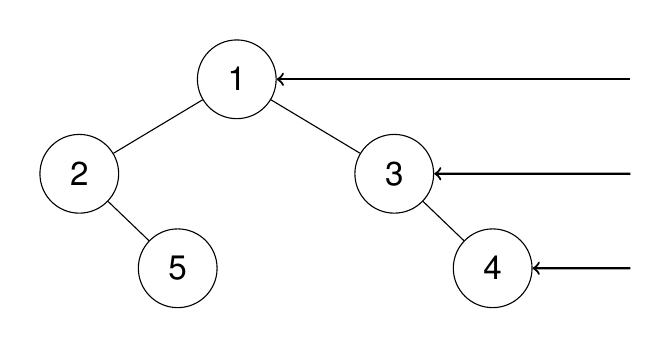

- 5.4.1. [8] Lowest Common Ancestor of a Binary Search Tree (235) topic_tree

- 5.4.2. [9] Balanced Binary Tree (110) tree

- 5.4.3. [10] Linked List Cycle (141) pointers tortoise_hare_method graph cycle_detection

- 5.4.4. [11] Implement Queue Using Stacks (232) queue stack

- 5.4.5. [12] First Bad Version (278)

- 5.5. Day 5

- 5.6. Day 6

- 5.7. Day 7

- 5.8. Day 8

- 5.9. Day 9

- 5.10. Day 10

- 5.11. Day 11

- 5.11.1. [34] permutations [46] med recursion

- 5.11.2. [35] merge intervals [56] med array

- 5.11.3. TODO [36] Lowest Common Ancestor of a Binary Tree [236]

- 5.11.4. [37] Time Based Key-Value Store [981] med binary_search

- 5.11.5. [38] Minimum Window Substring [76] hard string

- 5.11.6. [39] Reverse Linked List [206] easy linked_list

- 5.11.7. [40] Serialize and Deserialize Binary Tree [297] hard binary_tree

- 5.12. Day 12

- 5.13. Day 13

- 5.14. Day 13 (restart)

- 5.15. Day 14

- 5.16. Day 15 Back from India + Revision

- 5.17. Day 16

- 5.18. Day 17

- 5.19. Day 18

- 5.20. Day 19 Restart

- 5.21. Day 20

- 5.22. Day 21

- 5.23. Day 22

- 5.24. Day 23

- 5.25. Day 24

- 5.26. Day 25

- 5.27. Day 26 started neetcode 150 hashing arrays

- 6. Personal Python Recipes & Idioms

- 7. Notes

- 8. TODOs

- 9. References

※ 2. Tackling the Basics: Neetcode 150

This should give some breadth of understanding. For each category of questions, we shall spend some time learning the key ideas behind that category and then dive into it. After that, we can tackle the weaker topics to hone the intuition (the expanded Neetcode 250 list can be found here), then move on to some hard questions, and finally just pick questions at random to let the RNG-gods give us experience.

※ 2.1. Goals, Context and Retro

-—

The labuladong blog has a really good outline of this process. It encourages exploring and learning patterns with a quality-first approach rather than a quantity-first one, and developing a framework for common patterns so that pattern-matching becomes easier. It’s good to read it. Careful not to be too dogmatic about frameworks though: the important thing is still to get the intuition behind things; we never were good at memory-work anyway.

-—

The time reports for the sections are for doing, correcting and reviewing. Reviewing is roughly about 60-75% of the time taken.

In general, I’m targeting to reach 20 mins for mediums, less than 10 for easy, 30 mins for hards.

-—

Retro:

Now that I’ve finished this basic 150 questions, I have some learnings:

What has been good:

- I’m mostly able to get the intuition behind the questions or have an intuition for what the tricks are. It’s not really that difficult to grasp the basic concepts. The tricky ones come in typical patterns (e.g. tedium vs attention to detail that elucidates a non-obvious mathematical/logical property).

- My coding style is very clean and demonstrates the best parts about the language. So the implementation is usually performant, only possibly bottle-necked by algorithmic complexity.

- Really grateful for my live website solution, which is pleasant to use and assists me with the memory hacking. First thing I do when I’m up is think about what I did yesterday and what I will achieve today. Last thing I do before I sleep is review what I did today and what I will do tomorrow. I will be spartan about this. Motivation is high when things click easily. That’s the feeling to keep driving my confidence upwards.

What needs improvement:

- I haven’t been successfully able to build up incrementally to the Hard solutions. I believe that this is making me subconsciously think that the hard questions are just straight up pedantic / test for something only luck will help. I would like to NOT believe in that and find better ways to incrementally build up to optimal solutions. Honing my “Socratic thinking” would be a good way to do so. And also just straight up exposure to questions.

- I think implementation speed largely depends on how fast I come up with the v0.

- Entertaining the tests and focusing on the human aspects of face-to-face question solving. When I’m tired, I just think in my head. Additionally, the vocalisation is not efficient and it ends up distracting me sometimes. I’m usually a good teacher though, so I should probably speak in reaction to my thinking or realisation instead of multiplexing.

- Motivation management: spillovers have been demoralising. I think I need to do some emotional hacks and just always present the current situation as advantageous. I will have to identify my wins, from the smallest to the biggest.

- Related to work-rate, I’ll just let the time-tracking guide me. When I realise that it’s only brainfog, I need to allow myself to rest and add variety to the activity.

How that affects the Depth Phase:

- I think my depth phase will have just two parallel goals:

  1. Personal topical weaknesses + revision of the neetcode 150 questions.

     I’ll just be adding onto the topical notes at the top of every topic section, where I already have some notes.

     The purpose here would be to distil the following from my existing notes from the neetcode 150 batch:

     - boilerplate

     - common tricks that I haven’t consolidated yet

     - pitfalls: logical, accuracy / implementation.

  2. Generic topical weaknesses that usually come up for most people.

     These are the topics that people generally find difficult and which are over-represented in interviews.

     - advanced graphs

     - DP

- I will have to timebox the depth phase better.

※ 2.2. Arrays & Hashing

| Headline | Time |
|---|---|
| Total time | 3:11 |
| Arrays & Hashing | 3:11 |
| [1] Contains Duplicate (217) | 0:05 |
| [2] Valid Anagram (242) | 0:02 |
| [3] Two Sum (1) | 0:04 |
| [4] Group Anagrams (49) | 0:30 |
| [5] Top K Frequent Elements (347) | 0:28 |
| [7] Product of Array Except Self (238) | 0:38 |
| [8] Valid Sudoku (36) | 0:52 |
| [9] Longest Consecutive Sequence (128) | 0:32 |

※ 2.2.1. [1] Contains Duplicate (217)

Given an integer array nums, return true if any value appears at

least twice in the array, and return false if every element is

distinct.

Example 1:

Input: nums = [1,2,3,1]

Output: true

Explanation:

The element 1 occurs at the indices 0 and 3.

Example 2:

Input: nums = [1,2,3,4]

Output: false

Explanation:

All elements are distinct.

Example 3:

Input: nums = [1,1,1,3,3,4,3,2,4,2]

Output: true

Constraints:

- 1 <= nums.length <= 10^5

- -10^9 <= nums[i] <= 10^9

※ 2.2.1.1. Constraints and Edge Cases

Nothing out of the ordinary here, there’s no indication of needing to handle integer overflow cases or to do any type changes.

※ 2.2.1.2. My Solution (Code)

    from typing import List

    class Solution:
        def containsDuplicate(self, nums: List[int]) -> bool:
            # A set drops repeats, so a size mismatch means a duplicate exists.
            return len(set(nums)) != len(nums)

※ 2.2.1.2.1. Improved version

    from typing import List

    class Solution:
        def containsDuplicate(self, nums: List[int]) -> bool:
            seen = set()
            for num in nums:
                # Early exit: stop as soon as a repeated value shows up.
                if num in seen:
                    return True
                seen.add(num)
            return False

This just early-exits but the asymptotic performance is the same (linear time and linear space).
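To make the early-exit point concrete, here is a small sketch that counts how many elements each approach actually reads. The `count_consumed` helper and the test input are hypothetical, made up purely for illustration; the duplicate is deliberately placed at the front, which is the best case for early exit.

```python
from typing import Iterable, Iterator, List


def contains_duplicate_early_exit(nums: Iterable[int]) -> bool:
    """Same logic as the improved version: stop at the first repeat."""
    seen = set()
    for num in nums:
        if num in seen:
            return True
        seen.add(num)
    return False


def count_consumed(nums: List[int], consumed: List[int]) -> Iterator[int]:
    """Hypothetical helper: yield items and record how many were pulled."""
    for num in nums:
        consumed[0] += 1
        yield num


nums = [7, 7] + list(range(1000))  # the duplicate sits at the very front

early = [0]
early_result = contains_duplicate_early_exit(count_consumed(nums, early))

full = [0]
items = list(count_consumed(nums, full))  # building a set needs every element
full_result = len(set(items)) != len(items)

print(early_result, early[0])  # True 2
print(full_result, full[0])    # True 1002
```

Both give the same answer; the difference only shows up in how much of the input is touched, and it disappears when there is no duplicate at all (the worst case is still linear).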

※ 2.2.1.3. My Approach/Explanation

Since it’s a membership/duplicates check and they’re only looking for a bool output, I can just resort to whatever the stdlib gives by default.

Size checks make sense, and the only problem I can fault this for is that it duplicates the data in memory because of the creation of the set.

The size checks via len should be fast because the container stores its own length, so len is an O(1) lookup rather than a traversal.

※ 2.2.1.4. My Learnings/Questions

- I should spend a little bit longer trying to entertain the edge cases if it’s an in-person thing and someone is evaluating me for my thought process. If it’s a bot, then it’s alright I can just send as I wish.

- My one-liner doesn’t have an early-exit pattern; it always builds the full set before comparing sizes.

※ 2.2.2. [2] Valid Anagram (242)

Given two strings s and t, return true if t is an anagram of

s, and false otherwise.

Example 1:

Input: s = “anagram”, t = “nagaram”

Output: true

Example 2:

Input: s = “rat”, t = “car”

Output: false

Constraints:

- 1 <= s.length, t.length <= 5 * 10^4

- s and t consist of lowercase English letters.

Follow up: What if the inputs contain Unicode characters? How would you adapt your solution to such a case?

※ 2.2.2.1. Constraints and Edge Cases

- didn’t spend much time looking into this

※ 2.2.2.2. My Solution (Code)

    from collections import Counter

    class Solution:
        def isAnagram(self, s: str, t: str) -> bool:
            # Different lengths can never be anagrams.
            if len(s) != len(t):
                return False

            return Counter(s) == Counter(t)

- the length check is an early return that makes things fast

※ 2.2.2.2.1. Improved solution

Because the constraint limits the input to lowercase English letters, we know that the charset is fixed (26 characters). Therefore, it’s even faster to use a fixed-length count array indexed by character and do the counting there:

    class Solution:
        def isAnagram(self, s: str, t: str) -> bool:
            if len(s) != len(t):
                return False
            count = [0] * 26  # one slot per lowercase English letter
            for c1, c2 in zip(s, t):
                count[ord(c1) - ord('a')] += 1  # seen in s
                count[ord(c2) - ord('a')] -= 1  # cancelled by t
            # Anagrams cancel out exactly, leaving every slot at zero.
            return all(x == 0 for x in count)

※ 2.2.2.3. My Approach/Explanation

- Character counting works great if we use collections::Counter, which gives a refmap of the characters and their counts.

- The choice of data structure depends on whether the charspace is fixed or not.

- Counter works with a hashtable under the hood.

※ 2.2.2.4. My Learnings/Questions

Question: will Counter() handle different encodings well?

Answer: Yes. Counter works with any hashable object, including Unicode characters, so it will count every character (code point) in the input string. A Python str is already decoded Unicode, so the original byte encoding does not matter.

Caveat: If you want to support Unicode, your code doesn’t need to change, but using a fixed-size array for counting would not work; you must use a hash table (Counter or dict).
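A minimal sketch of the Unicode follow-up, under one assumption that goes beyond the answer above: Counter counts code points, so the same visible character written in composed and decomposed form is counted differently unless both strings are normalised first. The helper name `is_anagram_unicode` and its `normalize` flag are hypothetical, and whether normalisation is wanted depends on what the interviewer means by "character".

```python
import unicodedata
from collections import Counter


def is_anagram_unicode(s: str, t: str, normalize: bool = True) -> bool:
    """Hypothetical helper: compare code-point counts, optionally after NFC."""
    if normalize:
        # NFC composes sequences like 'e' + combining accent into one code point.
        s = unicodedata.normalize("NFC", s)
        t = unicodedata.normalize("NFC", t)
    return len(s) == len(t) and Counter(s) == Counter(t)


composed = "caf\u00e9"     # 'é' as a single code point
decomposed = "cafe\u0301"  # 'e' followed by a combining acute accent

print(is_anagram_unicode("日本語", "語本日"))                       # True
print(is_anagram_unicode(composed, decomposed, normalize=False))  # False
print(is_anagram_unicode(composed, decomposed))                   # True
```

The hash-table approach itself is unchanged from the ASCII case; only the optional normalisation step is new.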

- Other things that Counter can be used for: see “Other uses of Counter” below.

- In the realm of character counting, there are other approaches that might make sense:

  - If the charset is known, a fixed array representing the charset might make sense; we can then just count at each character’s fixed index.

Data structures for character counting:

- Using a fixed-size array for counting is the most space-efficient for lowercase English letters, as it avoids hash table overhead and is always O(1) space.

- Sorting both strings and comparing them is another common approach, but it is \(O(n \log n)\) and thus less efficient than counting.
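For comparison, the sorting approach mentioned above can be sketched in a couple of lines. The function name `is_anagram_sorted` is made up for illustration; this is not the solution I submitted.

```python
def is_anagram_sorted(s: str, t: str) -> bool:
    """Sort-based check: O(n log n) time, versus O(n) for counting."""
    # The length check is a cheap early exit before paying for two sorts.
    return len(s) == len(t) and sorted(s) == sorted(t)


print(is_anagram_sorted("anagram", "nagaram"))  # True
print(is_anagram_sorted("rat", "car"))          # False
```

It is short and works for any character set, which makes it a reasonable first answer before moving to the counting version.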

For efficiently counting character frequencies, several data structures can be considered, each with its own advantages:

1. **Hash Table (Dictionary in Python)**:
   - **Time Complexity**: O(n) for counting frequencies, where n is the number of characters.
   - **Space Complexity**: O(k), where k is the number of distinct characters.
   - **Use Case**: Ideal for counting frequencies of characters or words, as it allows for constant-time complexity for insertions and lookups. This is especially useful for large datasets where you need to maintain a count of each unique character or word[2][4].

2. **Counter from `collections` Module**:
   - **Time Complexity**: O(n).
   - **Space Complexity**: O(k).
   - **Use Case**: A specialized dictionary for counting hashable objects. It provides a clean and efficient way to count frequencies and is highly optimized for this purpose. It simplifies the code and is recommended for most frequency counting tasks in Python[6].

3. **List (for Fixed-Size Character Sets)**:
   - **Time Complexity**: O(n).
   - **Space Complexity**: O(1) if the character set size is fixed (e.g., ASCII or lowercase English letters).
   - **Use Case**: Suitable for counting frequencies of characters when the character set is limited and known in advance. This approach uses a fixed-size list indexed by character codes, making it very efficient in terms of both time and space[2].

4. **Counting Sort**:
   - **Time Complexity**: O(n + k), where k is the range of the input.
   - **Space Complexity**: O(k).
   - **Use Case**: Useful when you need to sort data based on frequency counts. It is efficient for small ranges of input values and when sorting is required based on frequency[7].

**Summary**

For most general-purpose applications, using a `Counter` from Python's `collections` module is recommended due to its simplicity and efficiency. If you are dealing with a fixed character set, using a list to count frequencies can be slightly more efficient. For scenarios involving sorting based on frequency, counting sort can be a good choice.

Citations:

- [1] https://stackoverflow.com/questions/74344201/appropriate-data-structure-for-counting-frequency-of-string
- [2] https://www.geeksforgeeks.org/counting-frequencies-of-array-elements/
- [3] https://discourse.julialang.org/t/how-to-count-all-unique-character-frequency-in-a-string/19342
- [4] https://users.rust-lang.org/t/efficient-string-hashmaps-for-a-frequency-count/7752
- [5] https://www.sololearn.com/en/Discuss/2705816/letter-frequency-on-python-data-structures
- [6] https://towardsdatascience.com/3-ways-to-count-the-item-frequencies-in-a-python-list-89975f118899?gi=dcc78c14cf09
- [7] https://www.geeksforgeeks.org/counting-sort/
- [8] https://docs.python.org/uk/3/tutorial/datastructures.html

※ 2.2.2.4.1. Other uses of Counter

Python’s **collections.Counter** is a specialized subclass of the built-in dictionary designed for counting hashable objects. It works by storing elements as dictionary keys and their counts as values, making it easy to tally occurrences of items in iterables or mappings[4][2][5].

**How Counter Works:**

- **Initialization:** You can create a Counter in several ways:
  - From an iterable (like a list or string):
    ```python
    from collections import Counter
    c = Counter(['a', 'b', 'a', 'c', 'b', 'a'])
    # Counter({'a': 3, 'b': 2, 'c': 1})
    ```
  - From a mapping (dictionary):
    ```python
    c = Counter({'red': 4, 'blue': 2})
    # Counter({'red': 4, 'blue': 2})
    ```
  - Using keyword arguments:
    ```python
    c = Counter(red=4, blue=2)
    # Counter({'red': 4, 'blue': 2})
    ```

- **Behavior:**
  - Accessing a missing key returns `0` instead of raising a KeyError[4][5].
  - Counts can be positive, zero, or negative integers[2][5].
  - Setting a count to zero does not remove the key; use `del` to remove it entirely[4].

- **Common Methods:**
  - `.elements()`: Returns an iterator over elements, repeating each as many times as its count (ignores counts less than one)[5][3].
  - `.most_common([n])`: Returns a list of the `n` most common elements and their counts, sorted from most to least common. If `n` is omitted, returns all elements[5][3][8].
  - `.subtract(iterable_or_mapping)`: Subtracts counts from another iterable or mapping; counts can go negative[5][3].
  - `.update(iterable_or_mapping)`: Adds counts from another iterable or mapping[5].
  - `.total()`: Returns the sum of all counts (available from Python 3.10)[5].

- **Arithmetic and Set Operations:** Counter supports addition, subtraction, intersection (`&`), and union (`|`) operations, which combine counts in intuitive ways[7].

**Usage Example:**

```python
from collections import Counter

words = ['apple', 'banana', 'apple', 'orange', 'banana', 'apple']
counter = Counter(words)
print(counter)
# Output: Counter({'apple': 3, 'banana': 2, 'orange': 1})
print(counter.most_common(1))
# Output: [('apple', 3)]
```

**Summary Table:**

| Feature | Description |
|---|---|
| Data structure | Subclass of dict; keys are elements, values are counts |
| Initialization | From iterable, mapping, or keyword arguments |
| Missing keys | Return 0 instead of KeyError |
| Methods | elements(), most_common(), subtract(), update(), total() |
| Supports | Arithmetic and set operations |

Counter is ideal for quickly counting occurrences and finding the most common elements in a collection[4][5][8].

- [1] https://realpython.com/python-counter/
- [2] https://www.digitalocean.com/community/tutorials/python-counter-python-collections-counter
- [3] https://www.geeksforgeeks.org/python/counters-in-python-set-1/
- [4] https://docs.python.org/3/library/collections.html
- [5] https://www.codecademy.com/resources/docs/python/collections-module/Counter
- [6] https://note.nkmk.me/en/python-collections-counter/
- [7] https://pymotw.com/2/collections/counter.html
- [8] https://www.pythonmorsels.com/using-counter/
- [9] https://stackoverflow.com/questions/13311094/counter-in-collections-module-python
- [10] https://www.youtube.com/watch?v=Lmsz6h25yiA
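The arithmetic and set operations are the part I use least, so here is a short sketch of how they behave. The `inventory` and `sold` counters are made-up data for illustration only.

```python
from collections import Counter

inventory = Counter(apple=3, banana=1)
sold = Counter(apple=1, banana=2)

# Reading a missing key gives 0 and does not insert the key.
missing = inventory["cherry"]

# Binary operators drop any result that is zero or negative.
remaining = inventory - sold  # Counter({'apple': 2})
common = inventory & sold     # minimum of each count
combined = inventory | sold   # maximum of each count

# subtract() works in place and keeps negative counts.
ledger = Counter(inventory)
ledger.subtract(sold)         # apple: 2, banana: -1

print(missing, "cherry" in inventory)  # 0 False
print(remaining)                       # Counter({'apple': 2})
print(ledger["banana"])                # -1
```

The difference between `-` and `.subtract()` is the one to remember: the operator silently discards non-positive counts, while the method preserves them.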

※ 2.2.3. [3] Two Sum (1)

Given an array of integers nums and an integer target, return

indices of the two numbers such that they add up to target.

You may assume that each input would have exactly one solution, and you may not use the same element twice.

You can return the answer in any order.

Example 1:

Input: nums = [2,7,11,15], target = 9 Output: [0,1] Explanation: Because nums[0] + nums[1] == 9, we return [0, 1].

Example 2:

Input: nums = [3,2,4], target = 6 Output: [1,2]

Example 3:

Input: nums = [3,3], target = 6 Output: [0,1]

Constraints:

- 2 <= nums.length <= 10^4

- -10^9 <= nums[i] <= 10^9

- -10^9 <= target <= 10^9

- Only one valid answer exists.

Follow-up: Can you come up with an algorithm that is less than O(n^2) time complexity?

※ 2.2.3.1. Constraints and Edge Cases

No specific time constraint given, good if less than O(n^2).

※ 2.2.3.2. My Solution (Code)

```python
from collections import defaultdict
from typing import List


class Solution:
    def twoSum(self, nums: List[int], target: int) -> List[int]:
        # refmap: number -> index at which it was seen
        refmap = defaultdict(bool)
        for idx, num in enumerate(nums):
            complement = target - num
            if complement in refmap:
                return [idx, refmap[complement]]
            else:
                refmap[num] = idx

        # no fallthrough, ans is guaranteed
```

So I kind of made a small oopsie: the defaultdict is not really necessary, and a plain dict would have done.
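A quick sketch of why the `defaultdict(bool)` happened to be harmless in my solution: membership tests never invoke the default factory, only indexing does. The snippet below is illustrative only and reuses the `refmap` name from above.

```python
from collections import defaultdict

refmap = defaultdict(bool)

# A membership test does not create the key.
seen_before_lookup = 5 in refmap
size_after_membership_test = len(refmap)

# Indexing a missing key does: it inserts bool() == False as the value.
default_value = refmap[5]
seen_after_lookup = 5 in refmap
```

So the default only matters if the code ever reads `refmap[x]` for an unseen `x`, which the `in` check guards against.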

Even clearer solution:

```python
from typing import List


class Solution:
    def twoSum(self, nums: List[int], target: int) -> List[int]:
        num_to_index = {}
        for idx, num in enumerate(nums):
            complement = target - num
            if complement in num_to_index:
                return [num_to_index[complement], idx]
            num_to_index[num] = idx
```

- Time Complexity:

- Each lookup and insertion in the dictionary is \(O(1)\) on average.

- You traverse the list once: \(O(n)\) time.

- Space Complexity:

- In the worst case, you store every number in the dictionary: \(O(n)\) space.

※ 2.2.3.3. My Approach/Explanation

Classic question.

This time I made sure to use collections.defaultdict to make things easy.

No new discoveries, as I had done this one before.

This should be the most efficient in terms of space (since we return early as we build a dict) and time. It should be faster than \(O(n^2)\) because we only do a single pass and make reference to a visited collection. So runtime and space should be linear here.

※ 2.2.3.4. My Learnings/Questions

The refmap can be better named to include information of what is held, although that feels more like a nitpick.

※ 2.2.3.5. GPT analysis on this

Certainly! Here's a detailed evaluation of your Two Sum solution:

## 1. **Correctness**

Your solution is correct and will always return the indices of the two numbers that sum to the target, as guaranteed by the problem statement.

- **Logic:**
  - You iterate through the list and, for each number, compute its complement (`target - num`).
  - If the complement is in the reference map (`refmap`), you return the indices.
  - Otherwise, you add the current number and its index to the map.
- **Edge Cases:**
  - Handles negative numbers, zeros, and duplicates correctly.
  - No fallthrough needed, since the problem guarantees exactly one solution.

## 2. **Time and Space Complexity**

- **Time Complexity:**
  - Each lookup and insertion in the dictionary is O(1) on average.
  - You traverse the list once: **O(n)** time.
- **Space Complexity:**
  - In the worst case, you store every number in the dictionary: **O(n)** space.

## 3. **Improvements in Code Style, Efficiency, or Clarity**

- **Use of defaultdict:**
  - You use `defaultdict(bool)`, but a plain `{}` (dict) is sufficient.
  - `defaultdict(bool)` will default missing keys to `False`, but you're storing indices (integers), not booleans.
  - This only becomes a real bug if you rely on the default value (for example a truthiness check like `if refmap[complement]:`), since index `0` is falsy (`0 == False`). The `in` check used here avoids that.
- **Order of indices:**
  - You return `[idx, refmap[complement]]`. The problem accepts either order, but the conventional form is `[refmap[complement], idx]` (first occurrence, then current).
- **Variable naming:**
  - `refmap` could be called `num_to_index` for clarity.
- **Class and method naming:**
  - Use `Solution` (capital S) and proper typing.

## 4. **Comparison to the Optimal Solution**

- Your approach is the optimal one: single-pass hash map.
- Sorting + two pointers is O(n log n) and loses the indices.
- Brute force is O(n²).

## 5. **Sample Optimal Solution (Pythonic Version)**

```python
class Solution:
    def twoSum(self, nums: List[int], target: int) -> List[int]:
        num_to_index = {}
        for idx, num in enumerate(nums):
            complement = target - num
            if complement in num_to_index:
                return [num_to_index[complement], idx]
            num_to_index[num] = idx
```

- This is the canonical, most Pythonic and clear version.

## 6. **Pythonic Improvements**

- Use a regular dictionary instead of `defaultdict`.
- Return indices in the conventional order (optional, since any order is accepted).
- Use descriptive variable names.
- Use capitalized class names (`Solution`).

## 7. **Your Specific Questions**

You didn't list any, but:

- **Alternative algorithms:** For this problem, the hash map approach is best. No need for sorting or brute force.
- **Why not defaultdict:** Because you're not using the default value; you're explicitly setting the index for each number.

## **Summary Table**

| Approach         | Time Complexity | Space Complexity | Early Exit | Pythonic |
|------------------|-----------------|------------------|------------|----------|
| Hash map (yours) | O(n)            | O(n)             | Yes        | Mostly   |
| Brute force      | O(n²)           | O(1)             | No         | No       |
| Sorting + 2 ptrs | O(n log n)      | O(n)             | No         | No       |

## **Final Notes**

- Your logic is spot-on; just use a regular dict. Returning the indices as earlier-then-later is a convention, not a requirement.
- This is the industry-standard solution for Two Sum.

Let me know if you want to discuss edge cases, variants, or other algorithmic approaches!

[1] https://leetcode.com/problems/two-sum/
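For comparison, here is a rough sketch of the "sorting + two pointers" alternative from the table above. Sorting by itself loses the original indices, so this version carries each index alongside its value. The function name `two_sum_sorted` is my own and this is only an illustration of why the approach costs O(n log n) time and O(n) extra space.

```python
from typing import List


def two_sum_sorted(nums: List[int], target: int) -> List[int]:
    # Pair each value with its original index so sorting does not lose it.
    indexed = sorted((num, idx) for idx, num in enumerate(nums))
    left, right = 0, len(indexed) - 1
    while left < right:
        total = indexed[left][0] + indexed[right][0]
        if total == target:
            return sorted([indexed[left][1], indexed[right][1]])
        if total < target:
            left += 1   # need a bigger sum
        else:
            right -= 1  # need a smaller sum
    return []  # not reached when exactly one answer is guaranteed
```

The hash map version still wins here; the two-pointer form is mostly worth knowing for the variants where the input is already sorted.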

※ 2.2.4. [4] Group Anagrams (49)

Given an array of strings strs, group the anagrams together. You can return the answer in any order.

Example 1:

Input: strs = ["eat","tea","tan","ate","nat","bat"]

Output: [["bat"],["nat","tan"],["ate","eat","tea"]]

Explanation:

- There is no string in strs that can be rearranged to form "bat".

- The strings "nat" and "tan" are anagrams as they can be rearranged to form each other.

- The strings "ate", "eat", and "tea" are anagrams as they can be rearranged to form each other.

Example 2:

Input: strs = [""]

Output: [[""]]

Example 3:

Input: strs = ["a"]

Output: [["a"]]

Constraints:

- 1 <= strs.length <= 10^4

- 0 <= strs[i].length <= 100

- strs[i] consists of lowercase English letters.

※ 2.2.4.1. Constraints and Edge Cases

- nothing out of the ordinary for this, just had to make sure the empty string was handled well.

※ 2.2.4.2. My Solution (Code)

```python
from collections import defaultdict
from typing import List


class Solution:
    def groupAnagrams(self, strs: List[str]) -> List[List[str]]:
        counter_to_group = defaultdict(list)
        for elem in strs:
            # 26-slot letter count; the empty string yields all zeros
            counter = [0] * 26
            for letter in elem:
                idx = ord(letter) - ord('a')
                counter[idx] += 1
            key = tuple(counter)  # lists are unhashable, tuples are fine
            counter_to_group[key].append(elem)

        return list(counter_to_group.values())
```

※ 2.2.4.2.1. Character Counting: My own optimisation for longer strings

```python
from collections import defaultdict
from typing import List


class Solution:
    def groupAnagrams(self, strs: List[str]) -> List[List[str]]:
        groups = defaultdict(list)
        for s in strs:
            count = [0] * 26
            for c in s:
                count[ord(c) - ord('a')] += 1
            groups[tuple(count)].append(s)
        return list(groups.values())
```

This is good for long strings.

Time: For each string of length K, you count letters in O(K) (plus a constant 26 to build the tuple key). For N strings, total time is O(N*K), where N = number of strings and K = average string length.

Space: O(N*K) for storing all strings and their groupings, plus O(N) for the dictionary keys (at most one per unique anagram group), so O(N*K) in total.

※ 2.2.4.2.2. Sorted Key: An older, faster solution for shorter strings

This uses a sorting approach because two anagrams, once sorted, give the same output. Strings are immutable and hence hashable, so the sorted string can be used directly as the dictionary key.

This particular solution is faster than what I attempted:

```python
from collections import defaultdict
from typing import List


class Solution:
    def groupAnagrams(self, strs: List[str]) -> List[List[str]]:
        refmap = defaultdict(list)
        for val in strs:
            # sorted() returns a list of chars; join it back into a hashable str
            common = "".join(sorted(val))
            refmap[common].append(val)

        return list(refmap.values())
```

Time:

- O(K log K) per string, so total O(N*K log K).

Space:

- O(N*K), same as the counting approach.

※ 2.2.4.3. My Approach/Explanation

- I treated it as a counting task yet again, but in this case I realised that overusing stdlib functionality can be costly. My initial solution was slow compared to other submissions, so I had to try to make it better.

※ 2.2.4.4. My Learnings/Questions

- Anagram checks can be derived based on thinking about what they are. Anagrams ==> permutations of the same collection of characters ==> sorting can make two anagrams equal ==> we can use this to do checks

- Time complexities.

My improved solution: Time Complexity: for each string of length K, you count letters in O(K). For N strings, total time is O(N*K), where N = number of strings and K = average string length.

Space Complexity: O(N*K) for storing all strings and their groupings, plus O(N) for the dictionary keys (at most one per unique anagram group).
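To make the "anagrams ==> same canonical form" learning concrete, here is a minimal sketch of the two grouping keys used in the solutions above, pulled out as standalone helpers. The names `sorted_key` and `count_key` are mine, purely for illustration.

```python
from typing import Tuple


def sorted_key(s: str) -> str:
    # O(K log K): anagrams collapse to the same sorted string.
    return "".join(sorted(s))


def count_key(s: str) -> Tuple[int, ...]:
    # O(K): anagrams collapse to the same 26-slot letter histogram.
    counts = [0] * 26
    for ch in s:
        counts[ord(ch) - ord("a")] += 1
    return tuple(counts)
```

Either key works as a dictionary key; the trade-off is the sort cost versus the fixed 26-slot tuple that gets built even for very short strings.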

※ 2.2.5. [5] Top K Frequent Elements (347)

Given an integer array nums and an integer k, return the k most frequent elements. You may return the answer in any order.

Example 1:

Input: nums = [1,1,1,2,2,3], k = 2 Output: [1,2]

Example 2:

Input: nums = [1], k = 1 Output: [1]

Constraints:

- 1 <= nums.length <= 10^5

- -10^4 <= nums[i] <= 10^4

- k is in the range [1, the number of unique elements in the array].

- It is guaranteed that the answer is unique.

Follow up: Your algorithm's time complexity must be better than O(n log n), where n is the array's size.

※ 2.2.5.1. Constraints and Edge Cases

- Couldn’t find anything extraordinary for this

- Runtime must be better than O(n log n)

※ 2.2.5.2. My Solution (Code)

Counter based solution:

```python
from collections import Counter
from typing import List


class Solution:
    def topKFrequent(self, nums: List[int], k: int) -> List[int]:
        # most_common(k) returns (element, count) pairs, most frequent first
        return [elem for elem, _ in Counter(nums).most_common(k)]
```

- Time: Counter(nums) is O(n). In CPython, most_common(k) with an explicit k delegates to heapq.nlargest, so it is O(m log k) for m unique elements; only most_common() without an argument sorts everything in O(m log m).

- Space: O(n) for the frequency map.

※ 2.2.5.2.1. Manual Counting + Sorting: My older, faster solution

```python
from collections import defaultdict
from typing import List


class Solution:
    def topKFrequent(self, nums: List[int], k: int) -> List[int]:
        freqmap = defaultdict(int)
        # accumulate the freq map:
        for num in nums:
            freqmap[num] += 1

        # tuples sort by their first element, so put the frequency first
        ref = sorted([(freq, num) for num, freq in freqmap.items()], reverse=True)

        return [v for _, v in ref[:k]]
```

- Time: O(n) to build the map, O(n log n) to sort the items.

- Space: O(n).

※ 2.2.5.2.2. Extension: Better than O(n log n): BucketSort or Heap

A bucket sort approach is better and runs in O(n) time:

```python
from collections import Counter
from typing import List


class Solution:
    def topKFrequent(self, nums: List[int], k: int) -> List[int]:
        count = Counter(nums)
        # Bucket sort: index = frequency, value = list of numbers with that frequency
        max_possible_frequency = len(nums)
        buckets = [[] for _ in range(max_possible_frequency + 1)]
        for num, freq in count.items():
            buckets[freq].append(num)

        # Flatten buckets from high to low frequency
        res = []
        for freq in range(len(buckets) - 1, 0, -1):
            for num in buckets[freq]:
                res.append(num)
                if len(res) == k:
                    return res
        # Only reached if k exceeds the number of unique elements
        return res
```

A heap-based approach is O(n log k), where k is the number of elements requested:

```python
import heapq
from collections import Counter
from typing import List


class Solution:
    def topKFrequent(self, nums: List[int], k: int) -> List[int]:
        count = Counter(nums)
        # nlargest keeps a heap of size k keyed on the frequency
        return [item for item, _ in heapq.nlargest(k, count.items(), key=lambda x: x[1])]
```
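`heapq.nlargest` hides the heap mechanics, so here is a rough sketch of the explicit size-k min-heap version of the same idea. The function name `top_k_frequent_heap` is mine; the point is only to show where the O(log k) per element comes from.

```python
import heapq
from collections import Counter
from typing import List, Tuple


def top_k_frequent_heap(nums: List[int], k: int) -> List[int]:
    count = Counter(nums)
    # Min-heap of (frequency, number); never grows beyond k + 1 entries.
    heap: List[Tuple[int, int]] = []
    for num, freq in count.items():
        heapq.heappush(heap, (freq, num))
        if len(heap) > k:
            # Evict the least frequent element seen so far.
            heapq.heappop(heap)
    return [num for _, num in heap]
```

The result comes back in heap order rather than sorted by frequency, which is fine because the problem accepts any order.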

※ 2.2.5.3. My Approach/Explanation

- Just adopted another counting approach, since Counter has the ability to do most_common.

- Went for the first thing within reach; a non-library approach would probably be similar, just with manual code for the counting part.

※ 2.2.5.4. My Learnings/Questions

Bucket sort works perfectly for the frequency comparisons. The key problem with the other methods is that we shouldn't be fully sorting the individual frequencies. Here's the intuition behind things:

Here's an intuitive breakdown of **why bucket sort or a heap is better** than a full sort for the Top K Frequent Elements problem, and how you can arrive at these solutions:

## **Why Not Full Sort?**

A naive approach is to:

- Count frequencies (O(n))
- Sort all items by frequency (O(n log n))
- Take the top k

But the problem asks for better than O(n log n) time.

## **Bucket Sort Intuition**

### **Key Insight**

- **Frequencies are integers between 1 and n** (where n is the array length).
- You don't care about the exact order, just the k most frequent elements.

### **How Bucket Sort Works Here**

1. **Count frequencies** of each element (O(n)).
2. **Create buckets**:
   - Make an array of n+1 lists (buckets), where index i holds all elements that appear i times.
3. **Fill the buckets**:
   - For each number and its frequency, put it in the bucket at index = frequency.
4. **Collect results**:
   - Starting from the highest-frequency bucket, collect elements until you have k.

### **Why is this O(n)?**

- Counting is O(n).
- Filling buckets is O(n).
- Collecting top k is O(n) (since at most n unique elements).
- **No sorting needed!**

This leverages the fact that the range of possible frequencies is small and bounded by n[3][4][6][7].

## **Heap Intuition**

### **Key Insight**

- You want the k largest frequencies, not a full sort.

### **How Heap Works Here**

1. **Count frequencies** of each element (O(n)).
2. **Use a min-heap of size k**:
   - For each unique element, push (frequency, element) into the heap.
   - If heap size exceeds k, pop the smallest.
3. **At the end, the heap contains the k most frequent elements**.

### **Why is this O(n log k)?**

- Each insertion/popping from the heap is O(log k).
- For n unique elements, total time is O(n log k).
- Much better than O(n log n) if k is small compared to n[2][4][5][8].

## **How to Arrive at These Solutions**

### **Bucket Sort:**

- After counting, realize that the number of possible frequencies is at most n.
- Instead of sorting, group elements by frequency directly.
- This is a classic bucket sort scenario: small, dense range of keys (frequencies).

### **Heap:**

- If you only need the k largest, a heap naturally supports this.
- Using a min-heap of size k ensures you always keep the k most frequent seen so far.
- This is a standard approach for "top k" problems.

## **Summary Table**

| Approach     | Time Complexity | When to Use                        | Intuition                        |
|--------------|-----------------|------------------------------------|----------------------------------|
| Full Sort    | O(n log n)      | Not optimal for large n            | Sort everything                  |
| Bucket Sort  | O(n)            | When range of keys (freq) is small | Group by freq, no sorting needed |
| Heap (min-k) | O(n log k)      | When k is much smaller than n      | Only keep k largest              |

### **References to Search Results**

- Bucket sort leverages the bounded nature of frequencies for O(n) grouping[3][4][6][7].
- Heap approach is efficient for top-k selection, avoiding full sort[2][5][8].

If you want a more detailed code walk-through or further intuition, let me know!

[1] https://www.geeksforgeeks.org/find-k-numbers-occurrences-given-array/feed/atom/
[2] https://www.youtube.com/watch?v=YPTqKIgVk-k
[3] https://cs.stackexchange.com/questions/168505/top-k-most-frequent-elements-and-bucket-sorting-intuition
[4] https://neetcode.io/solutions/top-k-frequent-elements
[5] https://www.cnblogs.com/hygeia/p/5759512.html
[6] https://leetcode.com/problems/top-k-frequent-elements/discuss/81602/Java-O(n)-Solution-Bucket-Sort
[7] https://www.byteinthesky.com/tutorials/top-k-frequent-elements/
[8] https://stackoverflow.com/questions/67073941/top-k-frequent-elements-time-complexity-bucket-sort-vs-heap
[9] https://algo.monster/liteproblems/347
[10] https://www.youtube.com/watch?v=SY26k8ZswPY

- Question: the constraint of “time complexity must be better than O(n log n)” – what is it supposed to hint?

Answer (hint):

- You should avoid sorting all elements.

- Use a heap (O(n log k)) or bucket sort (O(n)) for optimal performance.

- Question: could I have used an accessor function when using the sorted function to determine what to sort by? Answer: yes, the key parameter accepts any callable, such as a lambda function.
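As a small illustration of that last answer, this sketch sorts the `(num, freq)` pairs by frequency using `key=` instead of flipping them into `(freq, num)` tuples. The sample input is the one from Example 1; the variable names are my own.

```python
from collections import Counter

freqmap = Counter([1, 1, 1, 2, 2, 3])

# Sort (num, freq) pairs by the second field, highest frequency first.
by_freq = sorted(freqmap.items(), key=lambda item: item[1], reverse=True)
top_two = [num for num, _ in by_freq[:2]]
```

`operator.itemgetter(1)` would work in place of the lambda. Either way this is still a full O(n log n) sort, so it answers the style question but not the follow-up.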

※ 2.2.6. [6] Encode and Decode Strings [neetcode ref] [ref

See original solution here since it’s a locked question on leetcode, but not on neetcode.

※ 2.2.7. [7] Product of Array Except Self (238)

Given an integer array nums, return an array answer such that answer[i] is equal to the product of all the elements of nums except nums[i].

The product of any prefix or suffix of nums is guaranteed to fit in a 32-bit integer.

You must write an algorithm that runs in O(n) time and without using the division operation.

Example 1:

Input: nums = [1,2,3,4] Output: [24,12,8,6]

Example 2:

Input: nums = [-1,1,0,-3,3] Output: [0,0,9,0,0]

Constraints:

- 2 <= nums.length <= 10^5

- -30 <= nums[i] <= 30

- The input is generated such that answer[i] is guaranteed to fit in a 32-bit integer.

Follow up: Can you solve the problem in O(1) extra space complexity? (The output array does not count as extra space for space complexity analysis.)

※ 2.2.7.1. Constraints and Edge Cases

- No integer overflows expected, min speed should be O(n).

- the number of passes doesn't matter

- Stretch: Use O(1) space only

- we have to use the output array itself.

※ 2.2.7.2. My Solution (Code)

```python
from typing import List


class Solution:
    def productExceptSelf(self, nums: List[int]) -> List[int]:
        output = [1] * len(nums)
        # handle the prefixes first:
        accum = 1
        for idx in range(1, len(nums)):
            prev_num = nums[idx - 1]
            accum *= prev_num
            output[idx] = accum

        # now handle the suffixes:
        accum = 1
        for idx in range(len(nums) - 2, -1, -1):
            next_num = nums[idx + 1]
            accum *= next_num
            output[idx] *= accum

        return output
```

※ 2.2.7.2.1. A clearer solution

Here, we use a prefix and suffix temp vars instead of a single accum for extra clarity

```python
from typing import List


class Solution:
    def productExceptSelf(self, nums: List[int]) -> List[int]:
        n = len(nums)
        output = [1] * n

        # handle the prefixes first
        prefix = 1
        for i in range(n):
            output[i] = prefix
            prefix *= nums[i]

        # now handle the suffixes
        suffix = 1
        for i in range(n - 1, -1, -1):
            output[i] *= suffix
            suffix *= nums[i]

        return output
```

※ 2.2.7.3. My Approach/Explanation

It was clear that the O(n) requirement suggests a sweeping approach: compute prefix and suffix partial products, then multiply them together.

The O(1) space consideration was oddly phrased but the rough idea is that we can use the same output array that we intended to use for the result and in so doing we can keep it as O(1).

Intermediate results can be just kept in the array itself and a temporary variable can be used for the lookbacks or lookaheads.
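Before folding everything into the output array, it can help to see the two partial-product arrays spelled out. This is a sketch of the O(n)-extra-space version (the name `product_except_self_verbose` is mine); the O(1) solutions above are this same computation with `prefix` and `suffix` collapsed into running variables.

```python
from typing import List


def product_except_self_verbose(nums: List[int]) -> List[int]:
    n = len(nums)
    prefix = [1] * n  # prefix[i] = product of nums[0 .. i-1]
    suffix = [1] * n  # suffix[i] = product of nums[i+1 .. n-1]
    for i in range(1, n):
        prefix[i] = prefix[i - 1] * nums[i - 1]
    for i in range(n - 2, -1, -1):
        suffix[i] = suffix[i + 1] * nums[i + 1]
    # Everything left of i times everything right of i.
    return [prefix[i] * suffix[i] for i in range(n)]
```

For nums = [1, 2, 3, 4] the arrays are prefix = [1, 1, 2, 6] and suffix = [24, 12, 4, 1], which multiply element-wise to [24, 12, 8, 6].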

※ 2.2.7.4. My Learnings/Questions

- I think I analysed this well, the intuition was there from the first time that I had done this.

- The reverse step needs to be understood for better intuition, so the reversed range should be range(n - 1, -1, -1), where the start is the inclusive index n - 1, the end is the exclusive index -1 (so that 0 is counted) and the step is the reversed -1.

- Question: what can be faster than this? Answer: nothing. I'm blazingly fast already. Nothing can be faster than O(n) for this problem, since you must look at every element at least once. The only possible “faster” would be reducing constant factors, but your code is already optimal in both time and space. Any approach using division (with special handling for zeros) is not allowed per the problem.
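A tiny check of the reversed ranges discussed above, using n = 5 as an arbitrary example. It also shows the `range(n - 2, -1, -1)` form from my first solution, which skips the last index on purpose.

```python
n = 5

# Start at n - 1 (inclusive), stop before -1 (so 0 is included), step -1.
full_reverse = list(range(n - 1, -1, -1))

# reversed(range(n)) walks the same indices and is arguably easier to read.
same_as_reversed = list(reversed(range(n)))

# Starting at n - 2 skips the last index, as in the suffix pass of my solution.
skip_last = list(range(n - 2, -1, -1))
```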

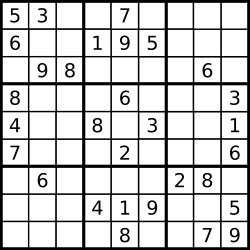

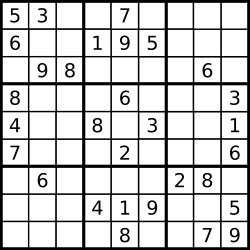

※ 2.2.8. [8] Valid Sudoku (36)

Determine if a 9 x 9 Sudoku board is valid. Only the filled cells need to be validated according to the following rules:

- Each row must contain the digits 1-9 without repetition.

- Each column must contain the digits 1-9 without repetition.

- Each of the nine 3 x 3 sub-boxes of the grid must contain the digits 1-9 without repetition.

Note:

- A Sudoku board (partially filled) could be valid but is not necessarily solvable.

- Only the filled cells need to be validated according to the mentioned rules.

Example 1:

Example 2:

Input: board =
[["8","3",".",".","7",".",".",".","."]
,["6",".",".","1","9","5",".",".","."]
,[".","9","8",".",".",".",".","6","."]
,["8",".",".",".","6",".",".",".","3"]
,["4",".",".","8",".","3",".",".","1"]
,["7",".",".",".","2",".",".",".","6"]
,[".","6",".",".",".",".","2","8","."]
,[".",".",".","4","1","9",".",".","5"]
,[".",".",".",".","8",".",".","7","9"]]

Output: false

Explanation: Same as Example 1, except with the 5 in the top left corner being modified to 8. Since there are two 8's in the top left 3x3 sub-box, it is invalid.

Constraints:

- board.length == 9

- board[i].length == 9

- board[i][j] is a digit 1-9 or '.'.

※ 2.2.8.1. Constraints and Edge Cases

- only some rules given, just have to evaluate ONLY them

- no need to care about empty slots

- just need to do repetition check

※ 2.2.8.2. My Solution (Code)

My solution just runs the 3 rules separately. If there are more rules, then each rule evaluation can be function-pointered and we could just add them as we wish. The code for the frequency check is the same regardless of slice.

Also this time I didn’t have much of a problem defining the slices because I have a better intuition for why the ranges are defined like they are.

```python
from typing import List


class Solution:
    def isValidSudoku(self, board: List[List[str]]) -> bool:
        # handle the rows:
        for row in board:
            frequency_ref = [0] * 9  # index = offset of the digit from '1'
            for val in row:
                if val == '.':
                    continue
                idx = ord(val) - ord('1')
                has_duplicate = frequency_ref[idx] > 0
                if has_duplicate:
                    return False
                frequency_ref[idx] += 1

        # handle the columns:
        for col_idx in range(0, 9):
            frequency_ref = [0] * 9  # index = offset of the digit from '1'
            column = (row[col_idx] for row in board)
            for val in column:
                if val == '.':
                    continue
                idx = ord(val) - ord('1')
                has_duplicate = frequency_ref[idx] > 0
                if has_duplicate:
                    return False
                frequency_ref[idx] += 1

        # handle sub_boxes:
        top_left_corners = (
            (row_idx_start, col_idx_start)
            for col_idx_start in range(0, 9, 3)
            for row_idx_start in range(0, 9, 3)
        )

        for row_start, col_start in top_left_corners:
            frequency_ref = [0] * 9  # index = offset of the digit from '1'
            box = (
                board[row_idx][col_idx]
                for row_idx in range(row_start, row_start + 3)
                for col_idx in range(col_start, col_start + 3)
            )
            for val in box:
                if val == '.':
                    continue
                idx = ord(val) - ord('1')
                has_duplicate = frequency_ref[idx] > 0
                if has_duplicate:
                    return False
                frequency_ref[idx] += 1

        return True
```
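The "each rule could be function-pointered" idea from the note above can be sketched like this: one shared duplicate check, plus one generator per rule that yields the groups of cells to test. All names here (`has_duplicate`, `rows`, `cols`, `boxes`, `RULES`, `is_valid_sudoku`) are my own and this is just one possible shape, still doing three reads per cell like my solution.

```python
from typing import Iterable, Iterator, List

Board = List[List[str]]


def has_duplicate(cells: Iterable[str]) -> bool:
    seen = [False] * 9  # index = offset of the digit from '1'
    for val in cells:
        if val == ".":
            continue
        idx = ord(val) - ord("1")
        if seen[idx]:
            return True
        seen[idx] = True
    return False


def rows(board: Board) -> Iterator[List[str]]:
    yield from board


def cols(board: Board) -> Iterator[List[str]]:
    for c in range(9):
        yield [board[r][c] for r in range(9)]


def boxes(board: Board) -> Iterator[List[str]]:
    for r0 in range(0, 9, 3):
        for c0 in range(0, 9, 3):
            yield [board[r][c]
                   for r in range(r0, r0 + 3)
                   for c in range(c0, c0 + 3)]


# Adding a new rule means adding one more group generator here.
RULES = (rows, cols, boxes)


def is_valid_sudoku(board: Board) -> bool:
    return not any(has_duplicate(group)
                   for rule in RULES
                   for group in rule(board))
```

Because `any` short-circuits over a generator, this still exits early on the first violation.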

※ 2.2.8.2.1. Ideal Solution: Single pass simultaneous checks

```python
from collections import defaultdict
from typing import List


class Solution:
    def isValidSudoku(self, board: List[List[str]]) -> bool:
        cols = defaultdict(set)
        rows = defaultdict(set)
        squares = defaultdict(set)

        for row_idx in range(9):
            for col_idx in range(9):
                cell = board[row_idx][col_idx]
                if cell == '.':
                    continue

                is_duplicate_in_rows = cell in rows[row_idx]
                if is_duplicate_in_rows:
                    return False
                rows[row_idx].add(cell)

                is_duplicate_in_columns = cell in cols[col_idx]
                if is_duplicate_in_columns:
                    return False
                cols[col_idx].add(cell)

                # squares are keyed by their box coordinate (row // 3, col // 3),
                # i.e. which of the 3 x 3 boxes the cell falls into
                box_key = (row_idx // 3, col_idx // 3)
                is_duplicate_in_squares = cell in squares[box_key]
                if is_duplicate_in_squares:
                    return False
                squares[box_key].add(cell)

        return True
```

This is superior because:

- Each cell is checked only once: Your approach checks each cell three times (row, column, box), while the single-pass approach checks each cell once and updates all three constraints in one go.

- Immediate feedback: If a violation is found, the function returns immediately, possibly before the rest of the board is checked.

- Cache locality: Fewer passes over the data means better use of CPU cache, which can matter for larger boards.

※ 2.2.8.3. My Approach/Explanation

- For the repetition check, the fixed character space of the digits 1 to 9 means that we can do very efficient fixed-array frequency checks. Just use ord(val) - ord('1') to get the offset from '1', which becomes the index in the array. The bot praised me: “Your use of ord(val) - ord('1') is clever for fixed-size arrays.”

- I acknowledge that my approach has 3 readings per cell and this is technically wasteful.

- The rest of it is a matter of being able to define the Python index ranges correctly.

※ 2.2.8.4. My Learnings/Questions

- Using the offset from '1' as the index into the fixed-size frequency ref-array is pretty neat!

- The single-pass approach is better for a general n x n board. In this case, three passes are tolerable because the board size is fixed.

Question: One of the better solutions online looks like this, but I don't have an under-the-hood understanding of why it is significantly faster (other than avoiding the duplicate reads for each cell).

```python
from collections import defaultdict
from typing import List


class Solution:
    def isValidSudoku(self, board: List[List[str]]) -> bool:
        cols = defaultdict(set)
        rows = defaultdict(set)
        squares = defaultdict(set)
        for r in range(9):
            for c in range(9):
                if board[r][c] == ".":
                    continue
                if (board[r][c] in rows[r]
                        or board[r][c] in cols[c]
                        or board[r][c] in squares[(r // 3, c // 3)]):
                    return False
                cols[c].add(board[r][c])
                rows[r].add(board[r][c])
                squares[(r // 3, c // 3)].add(board[r][c])
        return True
```

※ 2.2.8.5. GPT Critique

Here's a detailed review and analysis of your **Valid Sudoku** solution:

## 1. **Correctness**

**Your solution is correct**:

- It checks all three rules: rows, columns, and 3x3 sub-boxes, for duplicate digits (ignoring '.').
- The use of a fixed-size frequency array is appropriate since only digits 1-9 are valid.
- Handles all edge cases, including empty cells and full/partial boards.

## 2. **Time and Space Complexity**

- **Time Complexity:**
  - For each rule (row, column, sub-box), you scan all 81 cells: 3 × 81 = **O(1)** (since the board is always 9x9), but in general, **O(n²)** for an n×n board.
  - **However, each cell is checked three times** (once per rule), which is redundant but not problematic for a fixed-size board.
- **Space Complexity:**
  - Uses a fixed-size frequency array of length 9 for each check: **O(1)** extra space.

## 3. **Improvements in Code Style, Efficiency, or Clarity**

- **Clarity:**
  - Code is readable and well-commented.
  - The use of `ord(val) - ord('1')` is clever and efficient for mapping digits to indices.
- **Efficiency:**
  - The main inefficiency is that each cell is visited three times (once per row, column, and sub-box).
  - For a 9x9 board, this is not a real issue, but for a generalized n×n board, a single-pass approach is preferable.
- **Style:**
  - You could factor out the frequency check into a helper function to reduce code repetition.

## 4. **Comparison to the Optimal Solution**

- **Optimal Solution:**
  - The single-pass approach (as in your referenced solution) checks all three constraints in one traversal of the board, visiting each cell only once.
  - This uses three data structures (sets or arrays) to track seen digits for each row, column, and sub-box.

**Why is it faster?**

- **Fewer reads:** Each cell is processed once, not three times.
- **Immediate detection:** As soon as a duplicate is found in any rule, it returns `False` without unnecessary further checks.
- **Better for larger boards:** For larger or dynamic boards, this approach scales better.

## 5. **Sample Optimal Solution (Single Pass, O(1) Space and Time)**

```python
from collections import defaultdict

class Solution:
    def isValidSudoku(self, board: List[List[str]]) -> bool:
        rows = defaultdict(set)
        cols = defaultdict(set)
        boxes = defaultdict(set)
        for r in range(9):
            for c in range(9):
                val = board[r][c]
                if val == ".":
                    continue
                if (val in rows[r] or
                    val in cols[c] or
                    val in boxes[(r // 3, c // 3)]):
                    return False
                rows[r].add(val)
                cols[c].add(val)
                boxes[(r // 3, c // 3)].add(val)
        return True
```

- **Time:** O(1) for 9x9, O(n²) for n×n.
- **Space:** O(1) (since max 9 elements per set).

## 6. **Pythonic Improvements**

- Use of `defaultdict(set)` is idiomatic and clear.
- Could use list comprehensions or generator expressions for brevity, but clarity is more important here.
- You could use arrays of sets instead of `defaultdict` (since indices are always 0–8), but `defaultdict` is fine and concise.

## 7. **Your Specific Questions**

> Why is the single-pass solution with sets significantly faster?

**Explanation:**

- **Each cell is checked only once:** Your approach checks each cell three times (row, column, box), while the single-pass approach checks each cell once and updates all three constraints in one go.
- **Immediate feedback:** If a violation is found, the function returns immediately, possibly before the rest of the board is checked.
- **Cache locality:** Fewer passes over the data means better use of CPU cache, which can matter for larger boards.

**In summary:** The single-pass solution is more efficient in both time and space for larger or dynamic boards, and is more elegant and maintainable.

## **Summary Table**

| Approach         | Time per cell | # of passes | Space per constraint | Early exit? | Pythonic |
|------------------|---------------|-------------|----------------------|-------------|----------|
| Your solution    | O(1)          | 3           | O(1)                 | Yes         | Yes      |
| Single-pass sets | O(1)          | 1           | O(1)                 | Yes         | Yes      |

## **Conclusion**

- **Your solution is correct and efficient for 9x9 boards.**
- **Single-pass set-based solutions are slightly more efficient and are considered optimal for this problem.**
- Your use of `ord(val) - ord('1')` is clever for fixed-size arrays.
- For Pythonic style and efficiency, consider the single-pass approach.

Let me know if you want to see further variants or more in-depth analysis!

[1] https://leetcode.com/problems/valid-sudoku/description/

※ 2.2.9. [9] Longest Consecutive Sequence (128) med sequence accumulation

Given an unsorted array of integers nums, return the length of the longest consecutive elements sequence.

You must write an algorithm that runs in O(n) time.

Example 1:

Input: nums = [100,4,200,1,3,2] Output: 4 Explanation: The longest consecutive elements sequence is [1, 2, 3, 4]. Therefore its length is 4.

Example 2:

Input: nums = [0,3,7,2,5,8,4,6,0,1] Output: 9

Example 3:

Input: nums = [1,0,1,2] Output: 3

Constraints:

- 0 <= nums.length <= 10^5

- -10^9 <= nums[i] <= 10^9

※ 2.2.9.1. Constraints and Edge Cases

- it must run in O(n) time, so no direct sorting (a comparison sort is already O(n log n))

- however, does this mean that we can sort groups as we accumulate them? ANS: no, there is no such shortcut

- initial array is unsorted
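
For contrast, the sorting route that the O(n) requirement rules out looks roughly like the sketch below. The name `longest_consecutive_sorted` is hypothetical and only here for illustration; it is an O(n log n) baseline, not an accepted answer to the problem as stated.

```python
from typing import List, Optional


def longest_consecutive_sorted(nums: List[int]) -> int:
    """O(n log n) baseline: sort the distinct values, then scan for runs."""
    best = run = 0
    prev: Optional[int] = None
    for value in sorted(set(nums)):
        # Extend the run if value directly follows prev, otherwise restart it.
        run = run + 1 if prev is not None and value == prev + 1 else 1
        best = max(best, run)
        prev = value
    return best
```

It is still handy as a reference implementation when checking a faster solution against random inputs.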

※ 2.2.9.2. My Solution

※ 2.2.9.2.1. Initial wrong approach:

This attempt built ranges and then tried to merge them as intervals. However, interval merging is hard to get right. In the future, if there is an option that avoids merging intervals, we should take it.

from typing import List

class Solution:
    def longestConsecutive(self, nums: List[int]) -> int:
        ranges = []
        for num in nums:
            # any matching?
            for idx, (start, end_ex) in enumerate(ranges):
                # check if within:
                if (num >= start and num < end_ex):
                    continue
                # check if can extend the existing range:
                if (num + 1 == start):
                    ranges[idx] = (num, end_ex)
                    continue

                if (num == end_ex):
                    ranges[idx] = (start, num + 1)
                    continue

                ranges.append((num, num + 1))

        desc_sorted_ranges = sorted(ranges, key=lambda x: x[1] - x[0])
        biggest_range = desc_sorted_ranges[-1]

        return biggest_range[1] - biggest_range[0]

※ 2.2.9.2.2. Guided Improvement

The key ideas:

- We pick the start values and KEEP trying to build onto them. It’s like sliding along a NOP sled in a memory-corruption exploit: once you land on it, you just keep going until it ends. ==> only try to build sequences from the start of a sequence

- We just need to do membership checks quickly and using a set is the best idea here.

- Also it helps that we don’t need to return the longest set, we just need to return its length => hints at just needing an accumulator variable that we return as the result eventually

from typing import List

class Solution:
    def longestConsecutive(self, nums: List[int]) -> int:
        allSet = set(nums)
        curr_longest = 0
        for num in allSet:
            is_start_of_seq = (num - 1) not in allSet
            if is_start_of_seq:
                # init the length:
                length = 1
                current = num
                # try climbing the "NOP sled" ladder:
                while can_continue_climbing := (current + 1) in allSet:
                    current += 1
                    length += 1

                # so now we have an interval
                curr_longest = max(curr_longest, length)

        return curr_longest

Another improvement is to avoid the use of the current variable. We can directly use the length variable to expand the ’interval’.

from typing import List

class Solution:
    def longestConsecutive(self, nums: List[int]) -> int:
        num_set = set(nums)
        longest = 0

        for n in num_set:
            if n - 1 not in num_set:
                length = 1

                while n + length in num_set:
                    length += 1

                longest = max(longest, length)

        return longest

※ 2.2.9.3. My Approach/Explanation

Initial failed attempt:

- My approach was to define ranges/intervals using tuples.

Iterate through the nums; each time there are 3 cases:

- the num is within an existing range

- the num extends an existing range to the left ==> we can replace the tuple with an updated range

- the num extends an existing range to the right ==> we can replace the tuple with an updated range

Once all the numbers have been grouped, I just need to check whether any of the existing ranges can be merged with each other to create bigger super-ranges, like a cascade of sorts. However, I don’t know how to do this cascade.
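
For the record, the "cascade" can be avoided while still thinking in ranges. One well-known alternative (a different technique from the set-based solution in these notes) keeps a hash map from each run's endpoints to that run's length, so a new number merges its left and right neighbours in O(1). A minimal sketch, with the hypothetical name `longest_consecutive_boundaries`:

```python
from typing import Dict, List


def longest_consecutive_boundaries(nums: List[int]) -> int:
    # run_len[x] is the length of the run containing x.
    # It is only guaranteed to be up to date at the two endpoints of a run.
    run_len: Dict[int, int] = {}
    best = 0
    for n in nums:
        if n in run_len:
            continue  # duplicate: already placed in some run
        left = run_len.get(n - 1, 0)   # length of the run ending at n - 1
        right = run_len.get(n + 1, 0)  # length of the run starting at n + 1
        total = left + 1 + right
        run_len[n] = total             # mark n as seen
        run_len[n - left] = total      # refresh the merged run's left endpoint
        run_len[n + right] = total     # refresh the merged run's right endpoint
        best = max(best, total)
    return best
```

Because `n` has not been seen yet, `n - 1` can only be the right end of its run and `n + 1` the left end of its run, which is why reading the stored lengths at those two positions is safe.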

Final Insight:

- it’s really just a NOP sled. The key insight is: you only need to start climbing from the “base” of a ladder (the minimal element of a sequence). If you start anywhere else, you’re not at the true start, and you’ll be duplicating work.

※ 2.2.9.4. My Learnings/Questions

- Problems with original solution:

- it introduces the need to merge intervals, and merging intervals efficiently should be seen as a hard problem

- the inner loop makes the whole thing O(n^2), which violates the stated requirement of O(n) time

- Key Intuition:

The key insight is: You only need to start climbing from the “base” of a ladder (the minimal element of a sequence)—if you start anywhere else, you’re not at the true start, and you’ll be duplicating work.

- We can use a set for O(1) lookups; a number is the start of a sequence if num - 1 is NOT in the set

- for each “start” we can count upwards until we no longer find consecutive numbers

- More intuition aspects:

When you see “longest consecutive sequence” and an O(n) requirement, think:

- Can I use a set for O(1) lookups?

- Can I only start counting at the minimal element of a sequence?

Avoid interval merging unless the problem is specifically about merging sorted intervals.
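
To see why starting only at the base keeps the work linear, the sketch below counts set-membership probes. `longest_with_probe_count` is a hypothetical helper written for this note, not part of any solution above.

```python
from typing import List, Tuple


def longest_with_probe_count(nums: List[int]) -> Tuple[int, int]:
    """Return (longest run length, number of set-membership probes)."""
    num_set = set(nums)
    longest = probes = 0
    for n in num_set:
        probes += 1                  # the `n - 1` start check
        if n - 1 in num_set:
            continue                 # not a start: skip without climbing
        length = 1
        while True:
            probes += 1              # one `n + length` climb check
            if n + length not in num_set:
                break
            length += 1
        longest = max(longest, length)
    return longest, probes
```

Every distinct value gets one start check, and each run of length L costs L climb checks (L - 1 hits plus one miss), so the total is exactly two probes per distinct value regardless of how the values are grouped.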

Extensions, more on interval merging:

The **most efficient and standard way to merge intervals** is as follows:

### **1. Sort the intervals by their start time**

Sorting ensures that any overlapping intervals are adjacent, so you only need to compare each interval to the last merged one[1][2][3][5][6][7][9].

### **2. Iterate and merge**

- Initialize a result list with the first interval.
- For each subsequent interval:
  - If it overlaps with the last interval in the result (i.e., its start is less than or equal to the end of the last merged interval), merge them by updating the end of the last interval to the maximum of both ends.
  - If it does not overlap, simply append it to the result list.

### **3. Time Complexity**

- **Sorting:** O(n log n)
- **Merging:** O(n)
- **Total:** O(n log n) (dominated by the sorting step)[3][5][6][7].

#### **Python Example**

```python
def merge(intervals):
    if not intervals:
        return []
    # Step 1: Sort intervals by start time
    intervals.sort(key=lambda x: x[0])
    merged = [intervals[0]]
    # Step 2: Iterate and merge
    for current in intervals[1:]:
        last = merged[-1]
        if current[0] <= last[1]:
            # Overlap: merge
            last[1] = max(last[1], current[1])
        else:
            # No overlap: add new interval
            merged.append(current)
    return merged
```

### **Why Not O(n)?**

- The need to sort the intervals by start time is fundamental for efficient merging; without sorting, you’d need to check all pairs, which is O(n²)[3][5].
- There are advanced data structures (like interval trees or balanced BSTs) that can support dynamic merging in streaming scenarios, but for the classic merge-intervals problem, **O(n log n)** via sorting is optimal and standard[10].

### **Summary Table**

| Approach           | Time Complexity | When to Use                                  |
|--------------------|-----------------|----------------------------------------------|
| Sort + Merge (std) | O(n log n)      | Most general and efficient for static input  |
| Naive (nested)     | O(n²)           | Only for very small input or educational use |
| Interval Tree      | O(n log n)      | For dynamic/streaming intervals              |

**In short:** **Sort by start time, then merge adjacent intervals in a single pass. This is the most efficient and standard approach for merging intervals.**[1][2][3][5][6]

If you need to handle intervals in a streaming fashion (i.e., intervals arrive one at a time), an interval tree or balanced BST can be used for efficient dynamic merging, but that’s a more advanced scenario[10].

[1] https://algocademy.com/blog/merge-intervals-a-comprehensive-guide-to-solving-this-classic-algorithm-problem/
[2] https://www.architectalgos.com/mastering-merge-interval-patterns-a-guide-to-solving-interval-based-problems-1a14ef065876
[3] https://www.geeksforgeeks.org/dsa/merging-intervals/
[4] https://leetcode.com/problems/merge-intervals/
[5] https://www.educative.io/interview-prep/coding/solution-merge-intervals
[6] https://algocademy.com/blog/mastering-merge-interval-problems-essential-techniques-for-coding-interviews/
[7] https://docs.vultr.com/problem-set/merge-intervals
[8] https://www.w3resource.com/data-structures-and-algorithms/array/dsa-merge-intervals.php
[9] https://blog.seancoughlin.me/mastering-the-merging-of-overlapping-intervals-in-python
[10] https://stackoverflow.com/questions/4748184/how-to-efficiently-merge-int-ranges-in-a-stream
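
For the streaming case mentioned above, the merge logic for a single arriving interval can be sketched with the standard-library `bisect` module on an already merged, sorted list. This is only an illustration: `insert_interval` is a hypothetical name, intervals are closed `(start, end)` tuples, and rebuilding the `starts`/`ends` lists makes each insert O(n), so it shows the idea rather than the O(log n) behaviour of a balanced tree.

```python
import bisect
from typing import List, Tuple

Interval = Tuple[int, int]


def insert_interval(merged: List[Interval], new: Interval) -> List[Interval]:
    """Insert `new` into a sorted, non-overlapping list; return the merged list."""
    start, end = new
    starts = [s for s, _ in merged]
    ends = [e for _, e in merged]
    lo = bisect.bisect_left(ends, start)   # first interval whose end >= start
    hi = bisect.bisect_right(starts, end)  # first interval whose start > end
    if lo < hi:
        # Intervals lo..hi-1 overlap or touch `new`: absorb them.
        start = min(start, merged[lo][0])
        end = max(end, merged[hi - 1][1])
    return merged[:lo] + [(start, end)] + merged[hi:]
```

Touching intervals such as `(1, 3)` and `(3, 6)` are merged here, matching the `<=` comparison used in the batch version.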

※ 2.2.9.5. GPT Guidance for Intuition

Here's a thorough evaluation of your **Longest Consecutive Sequence** solution and the concepts behind the optimal approach:

## 1. **Correctness of Your Solution**

Your approach tries to build and extend ranges (intervals) as you iterate through `nums`, but:

- **It is not correct** for several reasons:
  - You may create overlapping intervals that are never merged (e.g., `[1, 2]` and `[2, 3]` should become `[1, 3]`).
  - You may miss merging intervals that are adjacent after an extension.
  - The inner loop over all current ranges for every number makes it **O(n²)**, not O(n).
  - The logic for updating and merging intervals is incomplete and does not handle all cases.

**Example of failure:** Given `[1, 3, 2]`, the intended logic starts separate ranges for 1 and 3; when 2 arrives it extends a range, but the ranges are never merged into a single one covering 1 to 3, so it won't find the correct answer.

## 2. **Time and Space Complexity**

- **Time Complexity:**
  - Your code is **O(n²)** in the worst case, since for each number you may iterate over all current ranges.
- **Space Complexity:**
  - Up to O(n) for the `ranges` list.

## 3. **Improvements in Code Style, Efficiency, or Clarity**

- **Clarity:**
  - The interval approach is not wrong in spirit, but merging intervals efficiently is hard without sorting or auxiliary structures.
- **Efficiency:**
  - The O(n²) approach is not acceptable for large inputs.
- **Code Style:**
  - Variable names are clear, but the logic is convoluted due to the merging issue.

## 4. **Comparison to the Optimal Solution**

### **Optimal Solution Intuition**

- **Key Insight:**
  - If you use a set for O(1) lookups, you can find the start of each sequence by checking if `num - 1` is not in the set.
  - For every such "start", you can count upwards until you no longer find consecutive numbers.

### **Optimal Solution (O(n) time, O(n) space)**

```python
from typing import List

class Solution:
    def longestConsecutive(self, nums: List[int]) -> int:
        num_set = set(nums)
        longest = 0

        for num in num_set:
            # Only try to build sequences from the start of a sequence
            if num - 1 not in num_set:
                current = num
                length = 1
                while current + 1 in num_set:
                    current += 1
                    length += 1
                longest = max(longest, length)

        return longest
```

- **Why is this O(n)?**
  - Each number is only visited once as the start of a sequence.
  - The inner `while` only runs across the length of each sequence, and all numbers are only processed once in total.

## 5. **Pythonic Improvements**

- Use a set for O(1) lookups.
- Avoid unnecessary sorting or interval merging.
- Use clear variable names (`longest`, `current`, `length`).

## 6. **Your Specific Questions**

### **Q1: What makes this different from typical greedy solutions? How can I pattern-recognise better?**

- **Difference:**
  - The greedy part is recognizing that you only need to start counting from the smallest number in a sequence (i.e., when `num - 1` is not in the set).
  - The pattern to recognize:
    - When asked for "longest consecutive sequence" in O(n), think about using a set for fast membership and only starting new sequences at the minimal elements.

### **Q2: What's wrong with my solution?**

- **Main Issues:**
  - Inefficient (O(n²)) due to nested loops.
  - Fails to merge/extend intervals correctly.
  - Not scalable for large inputs.

## 7. **Summary Table**

| Approach          | Time Complexity | Space Complexity | Handles Merging? | O(n)? | Pythonic? |
|-------------------|-----------------|------------------|------------------|-------|-----------|
| Your intervals    | O(n²)           | O(n)             | ❌               | ❌    | Somewhat  |
| Set-based optimal | O(n)            | O(n)             | ✅               | ✅    | ✅        |

## **Conclusion**

- Your approach is creative but not efficient or correct for all cases.
- The optimal solution uses a set and only starts counting at sequence starts, resulting in O(n) time and O(n) space.
- Pattern recognition: When O(n) is required for "consecutive" problems, consider using a set for O(1) lookups and avoid redundant work.

**Let me know if you want to see more alternative approaches or have other questions!**

[1] https://leetcode.com/problems/longest-consecutive-sequence/description/

※ 2.2.10. [Depth-1] Subarray Sum Equals K (560) prefix_sum

Given an array of integers nums and an integer k, return the total number of subarrays whose sum equals to k.

A subarray is a contiguous non-empty sequence of elements within an array.

Example 1:

Input: nums = [1,1,1], k = 2 Output: 2

Example 2:

Input: nums = [1,2,3], k = 3 Output: 2

Constraints:

- 1 <= nums.length <= 2 * 10^4

- -1000 <= nums[i] <= 1000

- -10^7 <= k <= 10^7

※ 2.2.10.1. Constraints and Edge Cases

- there are negative numbers, which means the same prefix sum value may appear more than once
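
A quick way to see this is to tally the prefix sums. The sketch below uses a hypothetical helper `prefix_sum_counts` built on `itertools.accumulate` (the `initial` argument needs Python 3.8+); it is only meant to illustrate the edge case.

```python
from collections import Counter
from itertools import accumulate
from typing import List


def prefix_sum_counts(nums: List[int]) -> Counter:
    """Count how often each prefix sum occurs, including the empty prefix (0)."""
    return Counter(accumulate(nums, initial=0))
```

With only positive numbers the prefix sums strictly increase, so every value occurs once. For `[1, -1, 1, -1]` the prefix sums are 0, 1, 0, 1, 0, so a lookup structure has to store counts per value rather than mere presence.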

※ 2.2.10.2. My Solution (Code)

※ 2.2.10.2.1. v0: flawed, almost, double counting

from collections import defaultdict
from typing import List

class Solution:
    def subarraySum(self, nums: List[int], k: int) -> int:
        n = len(nums)
        prefix_sums = [0] * (n + 1)
        # because we have negative numbers, we can expect to have multiple values of the same prefix sum, at different indices
        # prefix sums to count
        ref = defaultdict(int)
        ref[0] = 1

        # creates the prefix sums
        for i in range(1, n + 1):
            prefix_sum = nums[i - 1] + prefix_sums[i - 1]
            prefix_sums[i] = prefix_sum
            ref[prefix_sum] += 1

        counts = 0

        for i in range(1, n + 1):
            curr = prefix_sums[i]
            complement = curr - k
            counts += ref[complement]

        return counts
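
To make the flaw in v0 concrete, it helps to compare it against a brute-force count. Both functions below are hypothetical helpers written for this check: `subarray_sum_v0` is a compact restatement of the v0 logic, and `subarray_sum_brute` is a quadratic reference.

```python
from collections import defaultdict
from typing import List


def subarray_sum_v0(nums: List[int], k: int) -> int:
    """v0 logic: tally every prefix sum first, then look up complements."""
    ref = defaultdict(int)
    ref[0] = 1
    prefix = [0]
    for x in nums:
        prefix.append(prefix[-1] + x)
        ref[prefix[-1]] += 1
    # Each lookup sees prefix sums from ALL indices, not only earlier ones.
    return sum(ref[p - k] for p in prefix[1:])


def subarray_sum_brute(nums: List[int], k: int) -> int:
    """O(n^2) reference: extend a running total from every start index."""
    count = 0
    for i in range(len(nums)):
        total = 0
        for j in range(i, len(nums)):
            total += nums[j]
            if total == k:
                count += 1
    return count
```

Both agree on the two examples from the problem statement, but on `nums = [1, -1, 1]`, `k = 1` the v0 logic returns 4 while the reference returns 3, because the tally already contains prefix sums that occur later in the array.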

this double counts: